Introduction

In the Beginner Track, you learned what AI is, how it learns, and where it appears in daily life. Now you will start applying that knowledge to the financial services environment.

Financial services relies on accuracy, consistency and evidence. AI can support these demands when its fundamentals are well understood. This module explains the building blocks of AI through the lens of regulated work. You will learn how AI systems interpret financial language, why domain-specific data is essential, and what makes AI suitable or unsuitable for specific tasks.

You do not need to be technical. You simply need enough literacy to understand how AI behaves inside financial products, oversight processes and customer interactions. This module sets that foundation.

What you will learn

• The types of AI used in financial services

• Why domain-specific training is essential in regulated environments

• How AI reads, interprets and analyses financial language

• The difference between generic AI and financial-grade AI

• The strengths and limitations of AI in financial work

AI is becoming embedded across the sector, but its role differs from that of consumer-facing AI. Financial services involves regulated interactions, sensitive personal information and decisions with real consequences. This shapes the types of AI that can be used safely.

Why AI Fits This Environment

AI performs well in tasks that involve:

• Analysing large volumes of unstructured information

• Recognising patterns in conversations and documents

• Identifying potential risks earlier in the process

• Producing consistent outputs at scale

These capabilities support the core obligations of the sector. Firms are expected to deliver fair outcomes, maintain accurate records and demonstrate complete oversight.

Why AI Must Be Treated Differently

Unlike entertainment or retail, AI in financial services must be:

• Traceable so firms can show how decisions were reached

• Explainable so outputs can be understood and challenged

• Domain-aligned so terminology and regulatory context are interpreted correctly

• Risk-aware so errors do not introduce customer harm

Generic AI systems do not meet these expectations by default.

The Beginner Track introduced the AI family tree. In financial services, these concepts appear through specific applications.

1. Pattern Recognition Systems

These systems detect behaviours or signals that match patterns they have been trained on.

Common uses include:

• Identifying possible fraud in transaction streams

• Detecting conversational cues for dissatisfaction or vulnerability

• Spotting anomalies in suitability documents

Strength: Reliable consistency at high volume

Limitation: Accuracy depends on training data that reflects real-world conditions; patterns missing from that data are likely to be missed
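To make the idea concrete, here is a minimal sketch of pattern-based flagging. It is not how any production fraud system works. It assumes a simple rule: a payment is unusual if it sits more than three standard deviations from a customer's past spending. The function name, the threshold and the sample amounts are all hypothetical.

```python
from statistics import mean, stdev

def flag_unusual_amounts(history, new_amounts, z_threshold=3.0):
    """Return the new amounts that sit far outside the usual range.

    Illustrative only: `history`, `z_threshold` and the rule itself are
    assumptions made for this sketch.
    """
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Every past amount was identical, so any different amount is unusual.
        return [a for a in new_amounts if a != mu]
    # Keep only the amounts whose distance from the average exceeds the threshold.
    return [a for a in new_amounts if abs(a - mu) / sigma > z_threshold]

# Hypothetical past payments for one customer.
history = [42.0, 38.5, 51.0, 45.2, 40.0, 47.8, 39.9, 44.1]
print(flag_unusual_amounts(history, [43.0, 49.5, 900.0]))  # prints [900.0]
```

The sketch also shows the limitation: the rule only knows what "normal" looks like from the history it was given. If that history does not reflect real customer behaviour, the flags will be unreliable.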

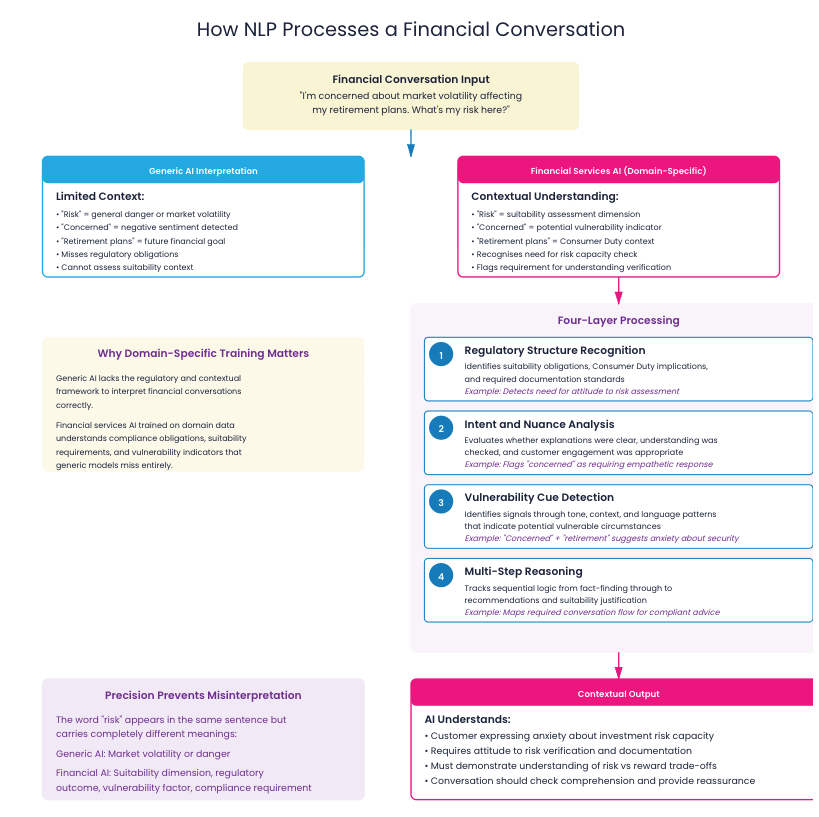

2. Natural Language Processing (NLP)

NLP allows AI to read and interpret human language. This is essential in a sector built on conversations, explanations and written records.

Typical uses:

• Extracting key facts from advice files

• Summarising adviser and client conversations

• Interpreting tone, sentiment and risk indicators

• Classifying content for compliance and QA

Why this matters for financial services:

Financial language carries regulatory weight. Words and phrasing signal obligations, explanation quality and evidence. AI must be trained on these norms to interpret them correctly.

3. Large Language Models (LLMs)

LLMs power many advanced AI applications. They can:

• Summarise complex documents

• Generate structured narratives

• Interpret long conversations

• Identify patterns across large datasets

LLMs trained on general internet data often:

• Misinterpret regulated terminology

• Invent plausible-sounding statements

• Struggle with the structure of advice and suitability processes

• Miss subtle indicators that matter for regulatory outcomes

These limitations are why domain-specific LLMs exist. They are trained on documents, conversations and regulatory language that reflect actual financial work.

4. Classification and Scoring Models

These models assign labels or risk scores to interactions, statements or documents.

Examples include:

• Complaint detection

• Vulnerability identification

• QA triage

• Suitability or quality scoring

Benefit: Firms can move from sampling a small percentage of calls to reviewing every interaction

Challenge: Requires continuous calibration and monitoring
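The sketch below shows, under simple assumptions, how a risk score can be turned into a triage label. The scores, call identifiers and threshold values are hypothetical. In practice a trained model would produce the scores, and the firm would set the thresholds through calibration.

```python
def triage(score, review_threshold=0.4, priority_threshold=0.8):
    """Map a risk score between 0 and 1 to a triage label.

    Both thresholds are illustrative assumptions, not recommended values.
    """
    if score >= priority_threshold:
        return "priority review"
    if score >= review_threshold:
        return "human review"
    return "no action"

# Hypothetical scores that a model might assign to four calls.
scores = {"call-001": 0.12, "call-002": 0.55, "call-003": 0.91, "call-004": 0.38}
for call_id, score in scores.items():
    print(call_id, triage(score))

# Lowering the review threshold sends more calls to a human reviewer.
print(triage(0.38, review_threshold=0.3))  # prints human review
```

Small changes to a threshold change how many interactions reach a reviewer. This is one reason these models need continuous calibration and monitoring.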

Meeting transcription and summarisation:

AI captures adviser-client conversations, extracts key facts, and generates structured meeting notes.

Benefit: Can reduce 90-minute admin tasks to 15 minutes while improving accuracy.

Suitability report generation:

AI creates compliant reports from meeting data and client information.

Benefit: Consistent quality across all advisers, faster turnaround for clients.

Compliance monitoring:

AI reviews 100% of interactions rather than 10% samples.

Benefit: Earlier detection of conduct risks and vulnerable customer indicators.
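A short calculation illustrates why full coverage matters. The figures are hypothetical: 1,000 calls, of which 5 contain a conduct risk, and a simple random sample of 10%. The function name is also an assumption made for this sketch.

```python
from math import comb

def chance_sample_catches_any(total_calls, risky_calls, sample_size):
    """Probability that a simple random sample contains at least one risky call."""
    # Ways to pick a sample with no risky calls, divided by all possible samples.
    miss_all = comb(total_calls - risky_calls, sample_size) / comb(total_calls, sample_size)
    return 1 - miss_all

# Hypothetical month: 1,000 calls, 5 of them risky.
sampled = chance_sample_catches_any(total_calls=1000, risky_calls=5, sample_size=100)
full = chance_sample_catches_any(total_calls=1000, risky_calls=5, sample_size=1000)

print(f"10% sample finds at least one risky call: {sampled:.0%}")
print(f"Full review finds at least one risky call: {full:.0%}")
```

Under these assumptions the 10% sample has roughly a 41% chance of containing even one of the five risky calls, while a full review covers all of them. Real call volumes and risk rates will differ, so treat the numbers as an illustration of the principle only.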

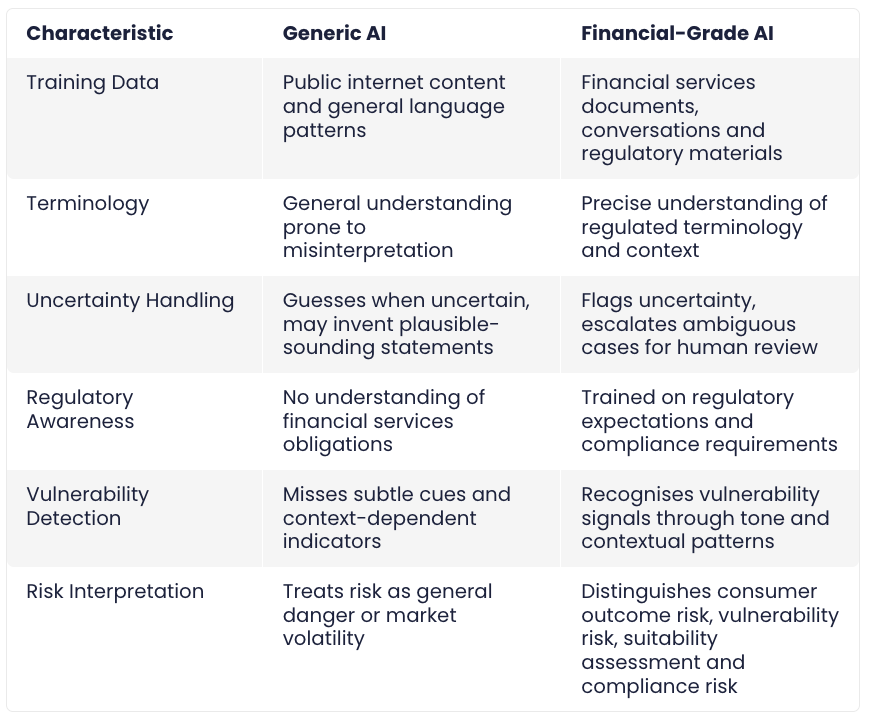

Generic AI is powerful, but financial services requires precision. A misinterpreted sentence or incorrect explanation can lead to customer harm or regulatory intervention.

The Limitations of General Models

General models often:

• Guess when uncertain

• Rely on patterns from public data that do not reflect financial reality

• Miss subtle regulatory indicators

• Confuse financial terminology

• Produce statements that sound confident but are incorrect

For example, a generic model might:

• Treat references to risk as market risk rather than consumer outcome risk

• Misinterpret vulnerability cues

• Invent regulatory guidance that does not exist

These issues arise because general models have not been trained on financial services data or regulatory expectations.

Financial language is structured and context dependent. AI must learn how these patterns operate.

What AI Needs to Understand

• Regulatory structure: Language often implies obligations, expectations or suitability criteria.

• Intent and nuance: Phrasing can signal whether explanations were clear or whether customer understanding was checked.

• Vulnerability cues: These often appear through tone or context, not specific keywords.

• Multi-step reasoning: Advice processes involve sequential logic from fact finding to recommendations to suitability justification.

Domain-specific models learn these relationships directly from financial services data.

Example: Understanding “Risk”

Generic AI might interpret “risk” as:

– Market volatility

– Investment risk

– General danger

Financial services AI understands “risk” contextually:

– Consumer Duty outcome risk

– Vulnerability risk factors

– Suitability assessment risk

– Regulatory compliance risk

This precision prevents misinterpretation that could lead to poor outcomes.

Strengths

• Ability to analyse every interaction, not only samples

• Consistent application of standards across advisers and teams

• Reduction in administrative overhead

• Earlier and more reliable surfacing of potential risks

• Structured insights produced from unstructured conversations and documents

• Support for fair outcomes through greater clarity and accuracy

Limitations

• Requires high-quality and relevant training data

• Must be monitored for drift as regulations or processes evolve

• Cannot replace regulated judgment

• Can misinterpret unusual or ambiguous cases

• Needs an oversight framework for safe operation

AI is effective when paired with the right governance and human review.
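Monitoring for drift can start with a very simple check. The sketch below compares how often a model flags interactions now with how often it did during a baseline period. It assumes each interaction is recorded as 1 (flagged) or 0 (not flagged). The function names, the tolerance and the data are hypothetical.

```python
def flag_rate(labels):
    """Share of interactions that were flagged (1 = flagged, 0 = not flagged)."""
    return sum(labels) / len(labels)

def drift_alert(baseline_labels, recent_labels, tolerance=0.05):
    """Return True when the recent flag rate moves more than `tolerance` from baseline.

    The tolerance of 0.05 is an illustrative assumption, not a recommended value.
    """
    change = abs(flag_rate(recent_labels) - flag_rate(baseline_labels))
    return change > tolerance

baseline = [1] * 8 + [0] * 92   # hypothetical baseline: 8% of interactions flagged
recent = [1] * 19 + [0] * 81    # hypothetical recent period: 19% flagged

print(drift_alert(baseline, recent))  # prints True
```

An alert like this does not say what has gone wrong. It only signals that behaviour has shifted and that a human should investigate, for example after a regulatory change or a new process.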

AI adoption is not about replacing processes. It is about improving scale, accuracy and oversight. To use AI effectively, firms need clarity on three points.

1. What AI is trained to do

AI performs best when its scope is specific and well defined.

2. What AI is not designed to do

Understanding limitations prevents inappropriate or risky use.

3. Where human judgment remains essential

High-stakes decisions require human involvement even when AI assists with analysis.

This clarity supports safe and confident adoption across the organisation.

You have completed Module 1 of the Intermediate Track. You now understand the following.

AI in Financial Services

• AI must be accurate, explainable and trained on finance-specific material

• Generic models are not enough for regulated use

Types of AI Used in FS

• Pattern recognition supports fraud detection and risk identification

• NLP interprets financial language and conversation signals

• LLMs can summarise and analyse long content when trained on domain data

• Classification models help firms achieve full oversight instead of small samples

Domain Matters

• Financial language carries specific meaning

• Domain-specific training reduces the risk of misinterpretation

• General models often produce inaccurate or unsafe outputs in FS contexts

Strengths and Limits

• AI improves consistency and scale

• AI still requires monitoring, appropriate training and human judgment

• AI effectiveness relies on understanding both its capabilities and its boundaries

What Comes Next

You are ready for Module 2: Why AI Matters for Your Role.

In that module you will explore:

• How AI supports advisers, compliance teams, risk teams and operations

• Where AI fits into each stage of the customer and advice journey

• How AI can improve daily work without replacing professional judgment

• Practical examples of AI as a tool for productivity and quality

You will begin connecting AI fundamentals to the specific responsibilities within your role.