FinLLM Case Study: Aveni Detect Vulnerability Detection

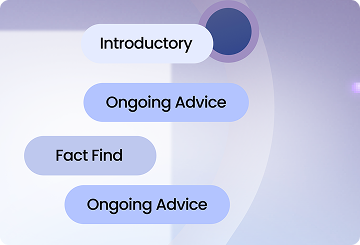

Financial advisers talk to clients for a living. Those conversations cover a lot of ground: investment options, pension planning, life changes. But sometimes, buried in an hour-long call, a client mentions something that matters in a different way. A health diagnosis. A job loss. A relationship breakdown.

These are signs that a client may be vulnerable. And catching them matters. Under Financial Conduct Authority (FCA) rules, firms have a duty to identify and respond to vulnerability early. But listening back through thousands of hours of recorded calls is not something a human team can do at scale.

That’s where AI comes in.

Aveni Detect already uses AI to flag moments in adviser-client calls where a client shows signs of vulnerability. But we wanted to improve how that works, using a model built specifically for financial services.

So we ran a test.

We put our in-house language model, FinLLM, head to head with models from some of the biggest names in AI, including OpenAI's GPT-4o. The question was simple: could a smaller, specialist model built for UK financial services outperform a much larger, general-purpose one at this specific task?

The answer was yes.

FinLLM correctly identified vulnerability in calls at a higher rate than GPT-4o, despite having a fraction of the parameters (think of parameters as the raw “brain size” of an AI model). And it didn’t just flag whether a vulnerability was present. It pinpointed where in the transcript the vulnerability appeared, reading the full hour-long call in one go rather than chopping it into smaller pieces.

The result: faster, more accurate vulnerability detection, with lower costs than relying on external AI providers. And for compliance teams, that means more confidence that nothing is falling through the cracks.

Read the full case study to see the benchmark results and how we built it.