In this webinar, Aveni’s CEO, Joseph Twigg, Head of NLP, Iria Del Rio and Chief Client Officer, Robbie Homer-Plews, held a live Q&A bootcamp as a crash course in AI fundamentals in financial services. You can watch a replay of the session here. Full transcript below:

Joseph: We’ve got Iria here. Iria is the brains behind the operation here at Aveni, as our principal AI engineer. And she’s also incredibly patient and a great communicator. So we’re lucky to have Iria here, who’s going to answer all of your questions I’ve no doubt.

And also Robbie, who spends his whole life with our clients and really brings that client perspective. So what questions are they asking? What level of understanding do they have? And really, you know, that conduit between this base level understanding and what a client should know.

And I’ll hopefully fill in the gaps.

So what we’ve done: we asked everyone attending for their questions and we’ve taken those questions and lined them up into this PowerPoint deck and we’re just going to step through each of these questions and try and answer them.

If you’ve got additional questions, please ask them along the way and we’ll do our best to answer them.

Right, let’s get into this. Iria, there were a few questions here which are all about the basics: What is AI? How do AI and machine learning interrelate? Where does natural language processing fit in? Now we’re all talking about large language models, what are they?

So paint the picture for us. What are we talking about here?

Iria: Thanks, Joseph. Hello everybody and I’m going to try to keep this at quite a high level for you so that you have a general grasp of all these concepts and hopefully, after the webinar, you will have a better understanding of how they fit with each other and are related.

To be honest, there’s so much going on. Even for people who have been working in the field and on NLP for years, it’s difficult to keep track of all the things that are happening, all the new concepts appearing all the time. So yes, it’s understandable for people who haven’t worked in this area to struggle to understand what’s going on.

Going through the relationship between all these concepts, basically artificial intelligence is the general field and it focuses on creating systems that are human-like; they have the same level of intelligence as humans. So that’s the idea for artificial intelligence.

Machine learning is just a subfield of artificial intelligence, and machine learning focuses on different algorithms to find patterns that allow you to relate an input and output.

Imagine that you have to, for example, classify images. And you have to classify them into cats and dogs. So your input could be the image and your output could be: OK this is a cat or this is a dog. What machine learning algorithms do is learn patterns in those images to be able to say, OK, this is a cat, this is a dog.

There are different ways of learning those patterns. They all involve maths and different complexities. You know different approaches, statistical and mathematical approaches to do that.
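As a minimal sketch of what "learning a pattern that relates an input to an output" can look like, the toy classifier below learns one average point per label and assigns new inputs to the nearest one. The features (weight and ear length) and all the numbers are invented for illustration. Real image classifiers work on pixels and use far more sophisticated methods.

```python
from statistics import mean

# Hypothetical toy features: (weight in kg, ear length in cm). Invented for illustration.
training_data = [
    ((4.0, 6.5), "cat"),
    ((3.5, 7.0), "cat"),
    ((5.0, 6.0), "cat"),
    ((20.0, 11.0), "dog"),
    ((30.0, 12.5), "dog"),
    ((25.0, 10.0), "dog"),
]


def train(examples):
    """Learn one 'pattern' per label: the average feature vector (centroid)."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }


def predict(centroids, features):
    """Return the label whose learned centroid is closest to the input."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    return min(centroids, key=lambda label: squared_distance(centroids[label], features))


centroids = train(training_data)
print(predict(centroids, (4.2, 6.8)))    # small animal, short ears
print(predict(centroids, (27.0, 11.5)))  # large animal, long ears
```

The "learning" here is just averaging, which is about the simplest statistical approach there is. The approaches Iria mentions differ in how they find the pattern, not in the basic input-to-output idea.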

Deep learning on the other hand is a subfield of machine learning and it uses what’s called a neural network. I’m not going to get into details of what a neural network is because it’s quite complex, but you can use the idea that neural networks were originally broadly inspired by how the brain works. So the idea was to use the brain architecture. The idea that neurons are connected with each other and learn by creating patterns between those neurons. When you’re learning something it is not just one neuron, it’s millions of neurons that are creating patterns in your brain to learn a new concept.

The same applies for neural networks, you have what’s called neurons or nodes, billions of them, that are connected with each other and learn statistical representations of information. For example, they learn patterns about how an image is a cat or how language works. So that is how deep learning works.
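To make "connected nodes" a little more concrete, here is a minimal sketch of a three-neuron network. The weights are set by hand for illustration so that the network outputs 1 when exactly one of its two inputs is 1. In a real network these numbers would be found by training, and there would be vastly more of them. The names `HIDDEN`, `OUTPUT` and `forward` are hypothetical.

```python
def step(z):
    """Simplest possible activation: the neuron fires (1) if its input sum is positive."""
    return 1 if z > 0 else 0


def neuron(inputs, weights, bias):
    """One node: weighted sum of its inputs plus a bias, passed through the activation."""
    return step(sum(x * w for x, w in zip(inputs, weights)) + bias)


# Hand-picked weights (an assumption for illustration; training would normally find them).
HIDDEN = [
    ((1.0, 1.0), -0.5),  # fires if at least one input is 1
    ((1.0, 1.0), -1.5),  # fires only if both inputs are 1
]
OUTPUT = ((1.0, -1.0), -0.5)  # fires if the first hidden neuron fires and the second does not


def forward(x1, x2):
    """Pass the inputs through the hidden layer, then through the output neuron."""
    hidden = [neuron((x1, x2), weights, bias) for weights, bias in HIDDEN]
    weights, bias = OUTPUT
    return neuron(hidden, weights, bias)


def count_parameters():
    """Every weight and every bias is one adjustable number, i.e. one parameter."""
    return sum(len(weights) + 1 for weights, _bias in HIDDEN + [OUTPUT])


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", forward(a, b))
print("parameters:", count_parameters())
```

This toy network has 9 adjustable numbers. The parameter counts quoted for language models are counts of the same kind of number.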

And large language models are basically just a really big neural network. They have billions of those neurons inside them.

Joseph: What’s a large language model?

Iria: A large language model is basically a neural network and what makes it different from other models that have been created before is that it’s really big. So it’s been trained on lots and lots of data. And also it has billions of neurons, or nodes, internally.

To put this into perspective, your brain has around 100 billion neurons and a model like GPT-3 has 175 billion of these neurons or parameters. So that’s what makes them so powerful, that they are really big and they’re trained on really big amounts of text.

This is a general graph to show how we got here. You can see in 2018 we had much smaller models. Those models had more or less the same architecture. So they were built in the same way. BERT uses what is called the transformer. So it’s a way to put together these neurons and different strategies to create this architecture.

BERT has transformers already in it, but it was much smaller than the current large language models. It was 340 million parameters. But in the middle of 2020 GPT-3 was launched and it had 175 billion parameters.

Basically what we’ve seen in the last few years is an increase in size, but the architecture, what is behind, is similar to what it was in 2019.
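The jump in size can be put into numbers with some quick arithmetic on the two parameter counts quoted above. The memory estimate assumes 2 bytes per parameter (16-bit numbers), which is an assumption for illustration and not something stated in the session.

```python
BERT_PARAMS = 340_000_000        # 340 million, as quoted above
GPT3_PARAMS = 175_000_000_000    # 175 billion, as quoted above

# How many times larger GPT-3 is than BERT, by parameter count.
ratio = GPT3_PARAMS / BERT_PARAMS

# Assumption: each parameter is stored as a 16-bit number (2 bytes).
BYTES_PER_PARAM = 2
bert_gb = BERT_PARAMS * BYTES_PER_PARAM / 1e9
gpt3_gb = GPT3_PARAMS * BYTES_PER_PARAM / 1e9

print(f"GPT-3 is about {ratio:.0f}x the size of BERT")
print(f"Storage for the weights alone: {bert_gb:.2f} GB vs {gpt3_gb:.0f} GB")
```

Under that assumption the weights alone go from well under a gigabyte to hundreds of gigabytes, which is one reason the larger models cannot simply be run on an ordinary laptop.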

Joseph: And GPT-4 is rumoured to be in the trillions.

Iria: Yes, I don’t think it has been confirmed, but it has been kind of confirmed that it’s a mix of models. It’s not just one model, it’s a mixture of different models working at the same time. So it’s even more powerful.

Joseph: That’s large language models and the basic definitions of AI. So what does ‘training a model’ mean?

Iria: This is kind of like trying to encompass how an LLM is built and how LLM is trained.

There are 3 components: the first step is when the LLM basically learns a representation of language in general. So it learns to speak, or, if you want, to write. And how it learns that is through a training technique that consists of predicting the next word.

These models are exposed to millions and millions of pieces of text from the internet, books, news, probably data from Twitter, data from all sorts of sources that are available on the internet. And those models are trained on a simple task of predicting the next word. So given a certain word, the model predicts the next one; then, given the preceding two words, the model predicts the third one, and so on and so forth.

So that’s what they learn in this pre-training phase, and they can learn that for natural languages like English or Spanish. They can also learn that for other types of languages like programming languages, for example.
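The next-word task can be sketched with nothing more than counting. The toy model below records which word follows which in a tiny made-up corpus and predicts the most frequent follower. This is a deliberately crude stand-in: a real LLM uses a neural network over long contexts, not a lookup table of word pairs, and the corpus here is invented.

```python
from collections import Counter, defaultdict

# Invented toy corpus; a real model sees vastly more text than this.
corpus = (
    "the client asked about a pension . "
    "the client asked about an isa . "
    "the adviser answered the client ."
)


def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequently seen follower of `word`, or None if the word is unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


model = train_bigrams(corpus)
print(predict_next(model, "the"))      # most common word after "the"
print(predict_next(model, "client"))   # most common word after "client"
print(predict_next(model, "mortgage")) # never seen in the corpus
```

Notice that the model has no notion of what a client or a pension is. It only knows which words tended to follow which in the text it saw.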

That’s the pre-training phase where they learn a statistical representation of language, but that is not enough because all of us have played with models like GPT-3.5, right? And we know that this model is aligned to do specific things. So if we ask it to just summarise this text, the model understands what we’re asking. These large language models are then trained on specific tasks. They learn how to perform specific tasks. For example, summarisation or following instructions. I tell you to summarise this text and the model is able to do that.

And finally, a very important step is alignment. These models are aligned to provide answers that are the type of answers that we expect as humans. And also aligned so the answers are politically correct and acceptable.

We have all seen how a lot of people were trying to break GPT-3, asking all sorts of very politically incorrect questions like, ‘how can I create a bomb?’ ‘How can I poison somebody?’ And things like that, right.

The funny thing is, the model probably has the knowledge to answer that. But it’s at this stage that models are aligned to not say certain things or to say certain things in a certain way. There are different ways of doing that. ChatGPT, for example, uses reinforcement learning through–

Joseph: Through human feedback?

Iria: Yes, it’s the other way around here, it’s human feedback. Basically, you have a bunch of humans reviewing the outputs of the model and saying ‘this is the one that you should be giving’, ‘this is not correct’ etc. That is quite expensive to do and one of the dangers of it is that you still have biases from those humans telling the model what is the best answer.
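One way published work turns "this answer is better than that one" into a training signal is a pairwise preference loss on a reward model's scores. The sketch below shows that formulation only as an illustration. It is an assumption that it resembles what any particular product uses, and the scores passed in are made-up numbers.

```python
import math


def preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss: small when the human-preferred answer scores higher, large otherwise.

    Computed as -log(sigmoid(reward_chosen - reward_rejected)).
    """
    margin = reward_chosen - reward_rejected
    return -math.log(1.0 / (1.0 + math.exp(-margin)))


# Made-up reward scores for two answers to the same prompt.
agrees_with_reviewer = preference_loss(reward_chosen=2.0, reward_rejected=0.0)
disagrees_with_reviewer = preference_loss(reward_chosen=0.0, reward_rejected=2.0)

print(f"scores agree with the human reviewer:    loss = {agrees_with_reviewer:.3f}")
print(f"scores disagree with the human reviewer: loss = {disagrees_with_reviewer:.3f}")
```

Training pushes the loss down, so the scoring model ends up ranking answers the way the reviewers did. That includes any biases the reviewers brought with them, which is the danger Iria points to.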

Another approach that was introduced by Anthropic recently is constitutional AI. The idea here is that a group of humans write a set of principles that establish which type of answers the model should be providing, how the model should be behaving, and then the model learns that itself. There’s no human involved there, or it can be other models evaluating what the model is doing, but only using that set of principles, or what was written, that kind of constitution.

And that’s all. It seems simple, it’s not. But usually all large language models have these three steps.

Joseph: Thanks, Iria. So that’s basically the basics, we’re starting to get a little bit technical here with large language models.

Next we’re going to come to some questions that are relevant to the financial services industry. Financial advice in particular.

‘How will AI cope with the highly regulated FCA financial advice market without falling foul of GDPR?’ This is a constant theme that we come across.

Questions around data: How does AI fit in with GDPR and processor responsibilities? How do you instil confidence in both advisors and consumers around the storage of their personal information? These three questions are also interrelated.

Robbie, we’ll come to you first on this. What’s the general perception from clients large and small in this space?

Robbie: I get all the fun questions, don’t I? You could probably have a whole webinar dedicated to this topic, to be honest. It’s quite an interesting area. I think there’s probably a couple of things worth clarifying.

Obviously there’s two mentions of GDPR in both of those questions. It’s worth pointing out that firstly, not all AI systems actually process any client data. The second point is GDPR obviously predates a lot of the technologies in the last few years. The Data Protection Act, which came in in 2018, which implemented the GDPR EU regulation in the UK, didn’t actually mention artificial intelligence. It’s not mentioned there whatsoever, but there are a number of provisions within the regulation which do relate to AI, and some of them are just general principles. So there is a mention there of a data controller or a data processor.

From a data controller perspective, normal rules apply. You have to define the purpose of how you’re going to be using the data, what’s it going to be for? You have to go through a process of doing data protection impact assessments on how the data is going to be used, what the risks are to individuals as well as meeting the legal requirement of establishing that legal base for the data processing.

So if you’re going to be processing client data through any system via AI or otherwise, you need that legal basis. Usually that’s in the form of consent. We mentioned financial advice. If you’re using AI in some capacity to be a meeting assistant with a client, you will need to have that client’s permission to do so. That can be verbal, capturing that consent in the meeting, or it can eventually be through updating terms of engagement, terms and conditions. But there has to be a legal basis for that processing.

Where it gets interesting with AI in particular is there’s an obvious tension between the regulation and the technology. So the regulation in particular, GDPR dictates that you have to minimise the use of client data. You have to limit the purpose of that data, and there’s obviously a tension there between what the regulator is saying and how this sort of technology works.

We’ve just talked about large language models and the amount of billions, trillions of data points, which are used to actually power those models. How do you align with data minimisation and purpose limitation of the regulation if you’re doing that? And I think this is obviously where it falls more on the data processor. So, how you are using client data at each stage of the model training journey. As Iria said, there’s pre-training, there’s training, live use, and at each stage you may well use different pieces of client data.

I think from our perspective and how we typically operate is minimising as much as possible the use of client data throughout each phase and that might be through anonymisation wherever possible, that might be through just general limitation agreement between us and our customer of how we use that data and why. And I think Iria will probably jump in here but there’s a lot of misconceptions in terms of this technology. In how it’s used. This isn’t usually ChatGPT. This isn’t putting data into a black box, which will then surface elsewhere. If you’re going to use this technology properly, use it in a controlled manner where data that you’re using for purposes of training models or in a live setting are actually only used in effectively small doses and are never stored. Never power the wider application, as it were. So typically how Aveni operates is the models that we use, they’re almost like closed circuits. The data that goes into that is never retained. So the actual minimisation is achieved through the way that we use the technology.
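As a rough illustration of what "anonymisation wherever possible" can mean in practice, the sketch below masks email addresses and UK-style mobile numbers before a piece of text is sent anywhere. It is not a description of Aveni's actual pipeline. The patterns, the placeholder labels and the `redact` function are all hypothetical, and simple patterns like these miss a lot, including names.

```python
import re

# Hypothetical patterns for illustration only; real redaction needs far broader coverage.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
UK_MOBILE_PATTERN = re.compile(r"(?:\+44\s?|\b0)\d{4}\s?\d{6}\b")


def redact(text):
    """Replace matched personal identifiers with neutral placeholders."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    text = UK_MOBILE_PATTERN.sub("[PHONE]", text)
    return text


note = "Call Jane on 07700 900123 or email jane.doe@example.com about her ISA."
print(redact(note))
```

The name "Jane" survives in the output, which shows why pattern matching alone is not enough and why the agreements about how data is used and retained matter just as much as the technical step.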

I don’t know, Iria, if there’s anything else you want to add more from the, I guess, processing side?

Iria: No, I think you explained it very well. The only aspect is sometimes we get these questions from people. Like, does the model somehow retain or learn automatically when you interact with it, and they don’t. What can happen is that–and that’s where you have to read the terms and conditions, which we do of course at Aveni when we use the language models, but I can understand some users don’t–is that certain providers can actually tell you that they are going to use snippets or parts of your text, to later on train a new model or new version of the model. But that doesn’t mean that when you are interacting with a model, it’s retaining your data.

But it’s also funny, Robbie, that people are very worried about this, but it’s true that large language models, like all the GPT models, have been trained on a lot of personal data because they didn’t go through the job of anonymising all the data on the internet.

Robbie: Yeah, exactly. All those YouTube videos.

Joseph: I think in the context of what technologies are openly available, ChatGPT you should not be sending personal client information to because ChatGPT, as OpenAI explicitly says in their terms and conditions, will retain the information for model training purposes.

You can access the same models via Azure, via Microsoft, and they don’t retain any of your information and you won’t be breaching any GDPR rules because it can be processed in the UK or EU. Just thought I’d bring that to life. I know there’s been quite a few instances where we’ve been told, “I’ve just been sending this to ChatGPT,” and it puts the fear of God in you.

Next question, ‘what’s the difference between vertical and horizontal AI?’ We actually refer to this quite often in our own narrative as a business. At Aveni, we are vertical AI, we’re vertically aligned. We only work in financial services. Our USP as a business is the way we bring financial services expertise alongside the technology expertise and build products and services around financial services.

So vertical AI is essentially AI applications, tooling and models that are all aligned behind a specific vertical. Horizontal is your ChatGPT, Gemini, Claude, these huge large language models that are designed for many purposes. And have an astonishing array of abilities across multiple modes, whether it’s text, generating pictures, now generating natural sounding voices.

They are astonishing. These big large language models. But you don’t need to be able to recite anything in the style of Shakespeare to deliver for UK financial services use cases. What you do need is a higher degree of transparency, a high degree of performance to be able to look through all the data that models have been trained on. And prove to the regulator there’s no bias. You know it’s being trained on appropriate data sets, etc.

What we believe is gonna happen over the next few years across different verticals, obviously not just FS, companies are going to emerge that really drive the adoption of AI in that vertical because each vertical is different, each vertical has specific needs.

Financial services is very heavily shaped by the regulator and that really has to be taken into account, especially if you want to transition away from just these Copilot use cases, into anything that’s AI first. You cannot deliver an AI first use case with a black box in a regulated environment like UK FS.

Onto the next question, ‘What are the typical or most successful AI applications that you’ve seen in the industry? E.g. is it more within the GI space for fraud detection or perhaps for large retail organisations for customer servicing?’

I think both of the above. What you’re seeing is that, in five years’ time, customer contact centres, large scale, high frequency contact situations will be fundamentally transformed. We can very much replicate with existing technology the role of that agent and I think that’s true for relatively basic financial services and everything that comes with that in terms of your people ringing up to find out where their card is or whatever.

And the most interesting things that I’ve seen, if you think about that GI space: I’ve come across a whole range of different startups in the broader ecosystem, and some of the insurance-focused startups are leveraging space tech, i.e. sending satellites to space and then using AI to create predictive analytics around a whole range of different industries for a whole range of different purposes.

Things like, you know, forest fires in the US when a fire starts, the wind direction. How likely is it going to be that it’s going to reach certain areas? How does that translate into insurance based decisions for those areas? These things are becoming very real time and yeah, it’s super innovative applications of the technology, I don’t know, Robbie, Iria, if you’ve got anything else to add there?

Robbie: I think what’s been interesting, if you take the FS space closer to home, is traditionally, as a business you set out strategy of what your main core objectives that you want to achieve as a business and then you’ll decide how technology can enable you to hit those goals.

I think what we’ve seen, particularly in the last 12 months, is a bit of a shift and a bit of a flip of that model, with a lot of organisations putting together AI councils or putting people into fairly senior positions, who are essentially responsible for the implementation of AI in the business. Which is a little bit of a funny way around of doing it.

It typically used to start with the challenge and thinking about the best technology to meet that objective. Now it’s, well, let’s just find a way to bring in AI regardless of whether that’s the right technology or not.

I think as you kind of alluded to there earlier, Joseph, that whole Copilot use case is an obvious starting point. I don’t remember the last time I’ve got out a notepad and paper with meetings. I just don’t take notes. I do that and my wrist hurts, I’m not used to it. And there’s always starting points with this and I think, the most successful has been where you can achieve quicker wins in a short space of time.

Joseph: I think the big difference, in terms of the impact of large language models, the performance of ChatGPT, GPT-4, etc and others is it can genuinely replicate quite a lot of white collar work. And whether it can do so with the level of accuracy to fully replace humans at the moment is question one, but it’s frighteningly close already in a lot of different areas.

Right. Next question. What are the major risks to be aware of?

Iria: I want to talk about large language models specifically–they are designed to generate answers. To generate text. So they are designed to predict the next word, to always say something.

That can be slightly risky because when you’re using them, they are always going to try to tell you something. They are always going to try to answer your question, even when they don’t have an answer. Unless you are touching a point that is actually part of the alignment, where the model has clearly been trained not to talk about X or Y, they’re going to try to tell you something, and they’re going to generate something. And that relates to hallucinations.

These models can make up stuff. And we all have seen that. Like the model, when you question it actually it can keep trying to justify the answer, or sometimes it says, “Oh yeah, you’re right about that, that was not actually accurate.” That can happen as well but it’s basically the nature of the model. The model is designed for that, for saying something, sometimes not the most accurate thing.

And the other aspect that is also problematic is the bias. The model is trained on a lot of text from the internet, and that text is probably not filtered; it is only in the subsequent phases that the model is aligned, as explained before. That means that all the prejudices, misconceptions, all the fake information there is on the internet, that we are exposed to every day, is also there.

You can have systems for recruiting people that tend to only recruit white males for technical jobs, for example. Why? Because they have only been exposed to that data. So the model can be built with a lot of bias in it.

And also there’s the other danger, which is that you try to reduce that bias and you end up creating a model that gives you ridiculous answers, as happened quite recently with Gemini. I think we all have read about it and this idea of “woke AI” and how by trying to create a model that is not biassed, you create a model that is very biassed in the other direction, and it’s actually inaccurate, and gives you fake answers. So all those aspects are, I think, among the most risky, and if you take them into a very regulated industry like financial services, the risks can be even bigger.

Joseph: And that sort of underpins the argument, doesn’t it, for vertical AI and, smaller, more highly tuned, much more transparent models to deliver the outputs here.

Iria: Understanding the data on which the model was trained is very important.

Joseph: Robbie, were you going to say something there?

Robbie: I was just gonna ask Iria. You mentioned bias there on both extremes. Obviously it’s very difficult in practice to eliminate bias entirely. So in an applied setting where the outputs of a model are going to drive decisions, how do you alleviate the risk of inaccurate information, biassed information, from impacting that end decision?

Iria: There are lots of techniques. In the first place, as I said, part of the training of the model, that alignment part is very important. So create training data that allows you to align the model towards what you want. And that’s very important, especially in an industry like ours.

If you have control over that, that’s really good. If you don’t, you are using a language model that has already been trained. You can do a lot with prompt engineering. We have seen that a lot in Aveni, with our own products. You can control a lot, the tone, you can control all of the outputs of what’s happening through prompt engineering.

It’s a lot of work and tests. Evaluating is very important because you can have the impression with these large language models that you can create a product in an afternoon. But you can’t create a stable product in an afternoon. That’s the other side of the coin.

And the other aspect is also traceability. In our case, for example, the model sometimes makes decisions over certain inputs, over certain text. If you can trace back to the text that is the source of the model’s answer, that helps a lot.
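A very simple way to picture traceability is to link each answer back to the sentence in the input that best supports it. The sketch below does this with plain word overlap. The function names and the example transcript are hypothetical, and word overlap is a crude stand-in chosen for illustration, not the technique Aveni uses.

```python
import re


def split_sentences(text):
    """Split on sentence-ending punctuation followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def words(text):
    """Lower-cased set of word tokens, used for a crude overlap score."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def trace_to_source(answer, transcript):
    """Return the transcript sentence sharing the most words with the answer."""
    sentences = split_sentences(transcript)
    if not sentences:
        return None
    answer_words = words(answer)
    scored = [(len(answer_words & words(s)), i, s) for i, s in enumerate(sentences)]
    overlap, index, sentence = max(scored, key=lambda item: item[0])
    return {"sentence_index": index, "sentence": sentence, "overlap": overlap}


# Invented example input and model answer.
transcript = (
    "The client wants to retire at 60. "
    "She has a low appetite for risk. "
    "She asked about ISA allowances."
)
answer = "The client has a low appetite for risk."

print(trace_to_source(answer, transcript))
```

Showing the reviewer the supporting sentence next to the answer lets them check the model's output against the source quickly, and a low overlap score is a hint that the answer may not be grounded in the text at all.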

Joseph: I think you may have touched on this, but what are the main ethical considerations to consider? I mean are we back into the sort of bias questions here?

Iria: Yeah, it’s related to bias and, you know, both extremes, right? Like the bias that can be coded in the model and the bias that can be introduced when trying to fight against it.

You have to find a balance, and you find that balance through testing the model a lot, and trial and error, and then again you can mitigate it through all the techniques I mentioned before.

There are other ethical considerations, like the model replacing people, but that happens with every technology. It’s not only AI and LLMs, it’s with every technology. It always has happened since the industrial revolution, people have been replaced by machines.

Joseph: And that’s a big topic, definitely for another time.

‘Who is developing large language models for the investment and wealth industry?’

I think we’ve got a bit of a breakdown here.

Iria: They have started to appear in the past years, we had models in 2018 already that were created from language models, like smaller, like BERT, for example or ELECTRA. So those models use BERT as a basis, and then they were trained on more financial services data or fine-tuned on financial services data. And more recently we have seen the same tendency but with large language models.

We have cases, for example FinMA. It uses LLaMA as a base model, so it uses an open source large language model. It has 7 and 30 billion parameters because you have the two versions of LLaMA.

There’s another one called FinTral that uses Mistral as the base large language model. And usually these are startups like us, or there are like research groups that use open source models and then use a corpus of financial services data or they fine tune into specific tasks using again financial services data.

A different case is BloombergGPT. This was created from scratch. Bloomberg have a lot of data of course, so they had the capacity to create a model from scratch and train it on general data and also on their internal financial services data.

So I think it’s a trend that as you were mentioning, Joseph, this change from horizontal models that work for everything to domain specific models. We have started to see it more and more. And I think as more open and more powerful open source models appear, it’s going to continue happening.

Do you want to talk a little bit about FinLLM.

Joseph: Yes, FinLLM should be on this list. This is a large language model that we’re building with some of our partners. And again, the difference from creating a massive horizontal model is that we don’t just want data for this thing to consume, and to feed it data, feed it data, feed it data.

What we want from a financial services specific perspective is to understand the use cases that we’re trying to resolve, understand the limitations of existing models and existing approaches and then very clearly train and tune models to solve those specific problems.

So if we’re at 7 or 30 billion parameters, I think that’s probably a reasonable size to solve some of the major gaps to adoption within the UK Financial Services. It’s super exciting and I guess watch this space.

A question from an attendee: ‘what’s involved in the onboarding of AI tech? Is it very labour intensive?’

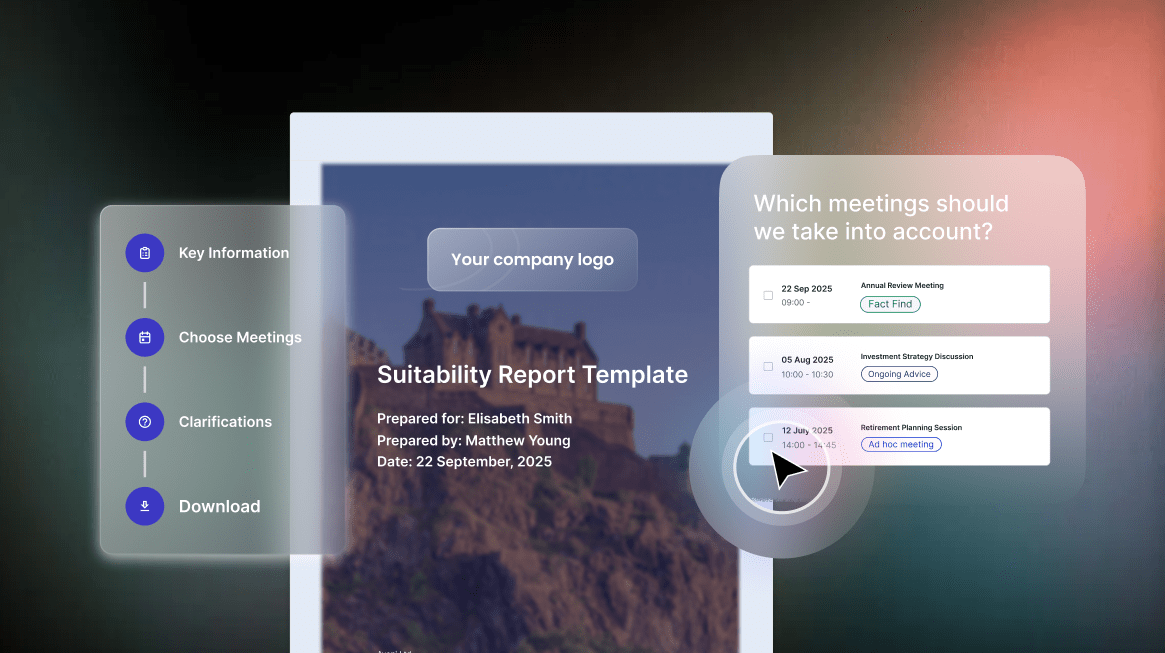

I think the answer to that is that it can be, but it can also be 5 minutes, you know, in the case of ourselves, we have one product that you can provide permissions to your video conferencing solution, permissions to your calendar and then our bot attends your meetings and you’re off. It can be as quick as that.

And I think it will become much easier for most consumers of this tech through their existing stack, whether that will be your CRM system, your platform, your back office system, whatever it is. That’s where you should really look for this tech, because then it’s easy, you can just turn it on.

Robbie: A couple of things I would add to that, Joseph. I’ve come across this a lot in conversations recently: people, probably more in the SME market, think this is technology for the bigger players, more of the enterprise organisations, when the reality is that mid-market firms are really well placed to take on AI technology. They’ve not got as rigorous a governance process necessarily as others. And as you said, it can be really quick to get started.

I think the one thing I would add is whilst it’s very quick to get started, AI is not magic. If you want to get the best out of technology like this, there is a bit of effort involved to ensure that you engage feedback, tune performance to get it to where it needs to be. But it’s not necessarily hugely intensive. We’re talking in the region of weeks or a short number of months.

Joseph: And the impact could be potentially significant.

One other question: ‘I understand the difference between horizontal and vertical AI, but how does that translate to the actual user and what differences would I see?’

I think that’s a pretty good question actually. You know the practical, tangible differences you’d see as an end user of FinLLM or a vertically aligned large language model are all about performance, so you know it’s more accurate, more of the time. It’s reliable enough to be deployed in, for example, AI first customer service. You can trust it. You can look through to the underlying data.

But as an end consumer on a day-to-day basis, the difference you’d see is an improved performance and we test our own internal models against GPT-4 on a regular basis. We’re not precious. If GPT-4 outperforms our models, we will use that. But in the vast majority of cases our models outperform GPT because they’re designed for very specific tasks.

One more question: ‘what do you think a day in the life of a financial advisor will look like in 10 years time?’

Substantially different is the answer to that, and I think obviously there’s also a range of potential outcomes here. I’m still a really big fan of human centred, human first financial advice in complex FS. I think you know that’s going to be around for a very long time. So I see some AI automation, i.e. some AI advice, but very, very, very efficient human-centred, human-first advice in that space in particular.

Robbie: Yeah I think on that, obviously the advice gap is a problem which has never, never gone away. Obviously AI is well placed to take care of low risk advice: GIA, ISA, restricted settings, which don’t necessarily carry the same complexity as at-retirement, inheritance or trusts.

And like you say, in those sort of situations where you’re talking about your life’s worth, your life savings, do you really want to put it in the hands of a machine? No, you don’t.

Joseph: Yes, exactly.

Iria: And we also see, there’s so many things that can be done with large language models. I think the first thing that people think is like, oh, this is going to take my job, this is going to replace me, right? Because it’s what we have been fed with movies all our life. They’re going to take over and destroy the world. But there’s so many boring things and so many manual things that are done in financial services that can be done with large language models and improve the life of people working in that domain, we see that every day. So I think that’s a big part of it.

Iria: Leading times are what they do very well.

Joseph: Very good point, Iria.

Right. This is the fun bit. We’ve got 5 more minutes. Let’s jump into this.

We’ve got three or four recent themes that have emerged. One of them is this sort of sentient, generally intelligent AI that effectively is going to take over the world and you know, out evolve humans. So, Iria, is this tomorrow, next month, next year, next day or next century?

Iria: It’s happening as we’re talking. It’s happening already. I don’t want to say I’m pessimistic. I think I’m more like a scientist in this sense, I have the scientist mind that is like, “What do you mean by that?” It’s just that, right? Like, what do we mean by being sentient? And there’s not even a definition of that. People have been discussing more, in the light of large language models, what general intelligence is, but there’s no definition of consciousness. There’s no definition of general intelligence, even for humans. When you have to measure the intelligence of somebody, there are different psychological frameworks, and there are different definitions of intelligence. So it’s not even clear what that means.

What we humans love is to talk with our dogs, put names to our plants, and humanise behaviours. That doesn’t mean that people think they are behaving intelligently.

So I think there is a difference in there that we have to keep in mind. Like, we tend to humanise behaviours, especially if there is a linguistic exchange involved. When we can talk with a machine, we tend to think, oh, it’s smart, you know, but I don’t think we are there. That doesn’t mean we’re not gonna get there. It’s a different thing.

Joseph: Yeah, exactly. And I think the main thing for me, the main comfort I take, is that, you know, these large language models, although they seem so intelligent, don’t actually understand anything. They have no ability to truly understand any context. They’re just regurgitating something they’ve learned.

Iria: There’s a very interesting paper that was written by somebody from Google. I think they then fired that person, but the paper was talking about parrots, because they just repeat what they have learned before.

Joseph: Exactly, highly efficient parrots. There you go.

Right. This is an interesting one. Hot off the press. This is the image generator within Gemini, the latest version of Gemini from Google, that was so woke it would represent historical images inaccurately by creating Nazis of different racial backgrounds. And there’s multiple different examples of this: the founding fathers of the US didn’t look like the founding fathers of the US. What’s going on here? And how did Google manage to embed such social and political influence within their large language model?

Iria: I don’t understand what happened here! I was thinking about it, with the amount of data and talent they have, it’s quite surprising they got to this. But I think the explanation we have is what we were talking about before: it’s trying to correct a certain behaviour so much that you go to the other extreme, and you go wrong in the other direction and you become a little bit ridiculous. Like making these things so politically correct that they are just nonsense.

Joseph: Last but not least, we’re coming up to time: could AI displace the financial advisor? Lots of little articles on this and lots of chat around it. My personal view on this is that no new technology would need to be invented to completely replace financial advice with machines. I think you could probably train and tune models to deliver very accurate financial plans across multiple layers of complexity.

The real question is who wants that? I personally feel, if you look at the robo-advice experience, there’s an investment and wealth management culture in the UK that is very people-first. And I don’t know how long that’s going to last, but it’s definitely the case at the moment.

I don’t know, Robbie, if you’ve got a different take on this?

Robbie: No, I think it goes back to what we said earlier. I don’t really know anyone who would want this. Certainly not across the board. I think there’s definitely low-hanging fruit that robo-advice has unfortunately not managed to get a hold of. But I don’t think it’s coming for the job of financial advisor at this moment in time.

Joseph: I’m very conscious of time. We’ve just gone over. Robbie, Iria, thank you so much for your insight. Super useful. If anyone has any additional questions or wants to talk to us (Iria, that’s what I mean by us), please don’t hesitate to get in touch. We’ll send a copy of this webinar around, but thanks Iria. Thanks Robbie. And thanks everyone for attending!