Updated September 2025

Nearly seven years ago, BERT sparked a revolution in how machines understand language. Today, that revolution has evolved into something more powerful and purpose-built for financial services. At Aveni, we’re leading this evolution with FinLLM, the UK’s first large language model designed specifically for the regulated financial sector.

Understanding where we came from helps illuminate where we’re going.

The BERT Foundation: Why Context Changed Everything

In 2018, Google released BERT (Bidirectional Encoder Representations from Transformers), a model that fundamentally changed natural language processing. Earlier language models either read text in a single direction or simply combined separate left-to-right and right-to-left passes. BERT instead conditions on the context on both sides of a word simultaneously, in every layer of the network.

Consider these two sentences:

- “I went to the bank to withdraw cash”

- “I sat down on the bank of the river”

Earlier word-embedding models such as word2vec and GloVe assigned the same numerical representation to “bank” in both cases. BERT instead produces a different representation for each occurrence, because the words surrounding “bank” determine its meaning, and any effective language model must account for this complexity.
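To make the static-versus-contextual distinction concrete, here is a deliberately tiny sketch. The 2-D word vectors in the hypothetical `VOCAB` table are invented (dimension 0 loosely means "finance", dimension 1 "nature"), and `contextual_embedding` uses a crude averaging rule over words on both sides as a stand-in for context. It is not how BERT computes representations (BERT uses learned self-attention over hundreds of dimensions); it only shows why a context-dependent vector can separate the two senses of “bank” when a static lookup cannot. The second sentence is phrased as “on the bank of the river” so that both sentences contain the standalone word “bank”.

```python
import math

# Hypothetical 2-D vectors, invented for illustration only.
# Dimension 0 ~ "finance", dimension 1 ~ "nature". Function words carry no signal.
VOCAB = {
    "i": (0.0, 0.0), "went": (0.0, 0.0), "to": (0.0, 0.0), "the": (0.0, 0.0),
    "sat": (0.0, 0.0), "down": (0.0, 0.0), "on": (0.0, 0.0), "of": (0.0, 0.0),
    "bank": (0.5, 0.5),  # ambiguous on its own: equally "finance" and "nature"
    "withdraw": (1.0, 0.0), "cash": (1.0, 0.0),
    "river": (0.0, 1.0),
}


def static_embedding(tokens, index):
    """Static lookup (word2vec/GloVe style): the surrounding words are ignored."""
    return VOCAB[tokens[index]]


def contextual_embedding(tokens, index, mix=0.5):
    """Toy contextual vector: blend the word's own vector with the average of
    every other word in the sentence, taken from BOTH sides of the word.
    A crude stand-in for context, not BERT's self-attention."""
    own = VOCAB[tokens[index]]
    others = [VOCAB[t] for i, t in enumerate(tokens) if i != index]
    avg = tuple(sum(v[d] for v in others) / len(others) for d in range(2))
    return tuple((1 - mix) * own[d] + mix * avg[d] for d in range(2))


def cosine(a, b):
    """Cosine similarity between two non-zero 2-D vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


finance = "i went to the bank to withdraw cash".split()
nature = "i sat down on the bank of the river".split()

s1 = static_embedding(finance, finance.index("bank"))
s2 = static_embedding(nature, nature.index("bank"))
c1 = contextual_embedding(finance, finance.index("bank"))
c2 = contextual_embedding(nature, nature.index("bank"))

print(f"static similarity:     {cosine(s1, s2):.3f}")  # identical vectors
print(f"contextual similarity: {cosine(c1, c2):.3f}")  # vectors now differ
print("finance 'bank':", tuple(round(x, 3) for x in c1))
print("nature  'bank':", tuple(round(x, 3) for x in c2))
```

The static vectors are identical (similarity 1.0), while the toy contextual vectors diverge: the first “bank” leans towards the finance dimension and the second towards the nature dimension.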

This contextual understanding proved transformative. BERT allowed machines to distinguish subtle variations in meaning that previous models missed entirely. Financial services, with its technical terminology and regulatory language, stood to benefit significantly from this advancement.

The Evolution: From BERT to Modern LLMs

BERT’s success spawned an entire ecosystem of specialised models. FinBERT emerged for financial sentiment analysis, trained on financial news and reports to better understand market language. Recent research shows that FinBERT achieved accuracy levels of 99.7% on financial classification tasks, demonstrating the power of domain-specific training.

The transformer architecture that underpins BERT also became the foundation for larger, more capable models. The GPT series brought generative capabilities to the forefront, whilst models like RoBERTa and ALBERT refined BERT’s approach. By 2025, the landscape has shifted from single-purpose models to sophisticated large language models that can handle a wide range of tasks within a single model.

Research published in 2025 confirms what the industry already knew: BERT-based models excel at structured classification tasks, whilst GPT-based models perform better at generation and real-time interpretation. The financial services industry needs both capabilities, wrapped in governance frameworks that meet regulatory requirements.

→ Discover how generative AI is transforming financial advice workflows in 2025

Why Generic LLMs Fall Short in Financial Services

General-purpose language models face significant limitations in regulated environments:

Regulatory Risk: Models trained on general internet data lack understanding of FCA guidance, Consumer Duty requirements, and financial regulations. Recent studies show that 84% of UK financial firms identify “safety, security and robustness of AI models” as their primary constraint to AI adoption.

Data Sensitivity: Financial institutions cannot send confidential client data to external APIs without rigorous controls. Generic models require organisations to compromise on data residency and privacy.

Accuracy Requirements: Hallucinations that might be tolerable in creative applications become unacceptable risks when providing financial guidance. FCA fines tripled to £176 million in 2024, whilst the EU AI Act now allows fines of up to €35 million or 7% of annual global turnover, whichever is higher, for the most serious breaches.

Domain Knowledge: Financial language differs substantially from general language. Terms like “Consumer Duty,” “suitability,” and “vulnerability” carry specific regulatory meanings that generic models fail to capture accurately.

Enter FinLLM: Purpose-Built for UK Financial Services

In May 2025, Aveni unveiled FinLLM, the UK’s first domain-specific large language model built for financial services. Developed in partnership with Lloyds Banking Group and Nationwide, FinLLM represents a fundamental shift in how AI serves regulated industries.

Unlike generic models retrofitted for financial use, FinLLM was designed from the ground up with financial services requirements at its core:

Domain-Specific Training: FinLLM is trained on financial services data, including regulatory documents, compliance frameworks, and industry-specific terminology. This specialised training enables the model to understand context that general-purpose models miss.

Regulatory Alignment: Built to align with FCA guidance and the EU AI Act, FinLLM incorporates compliance requirements into its architecture rather than treating them as constraints applied later.

Safety Framework: The September 2025 iteration of FinLLM introduced a comprehensive safety framework specifically designed for agentic AI adoption. This framework governs autonomous decision-making in customer interactions whilst maintaining regulatory compliance.

Performance Benchmarks: Extensive testing shows FinLLM consistently outperforms general-purpose LLMs on financial tasks whilst maintaining strong performance on standard benchmarks.

→ Explore how FinLLM’s safety framework enables responsible AI adoption

From Research to Real-World Impact

The evolution from BERT to FinLLM demonstrates a crucial lesson: foundational research provides the tools, but domain expertise creates practical value.

FinLLM powers Aveni’s flagship products, Aveni Detect and Aveni Assist, which serve some of the UK’s leading financial institutions. These applications demonstrate how domain-specific AI delivers tangible benefits:

Compliance Monitoring: Aveni Detect uses FinLLM to provide 100% monitoring of customer interactions, identifying regulatory risks that traditional sampling methods miss. Recent implementations have helped firms increase QA coverage from 2-3% to complete oversight.

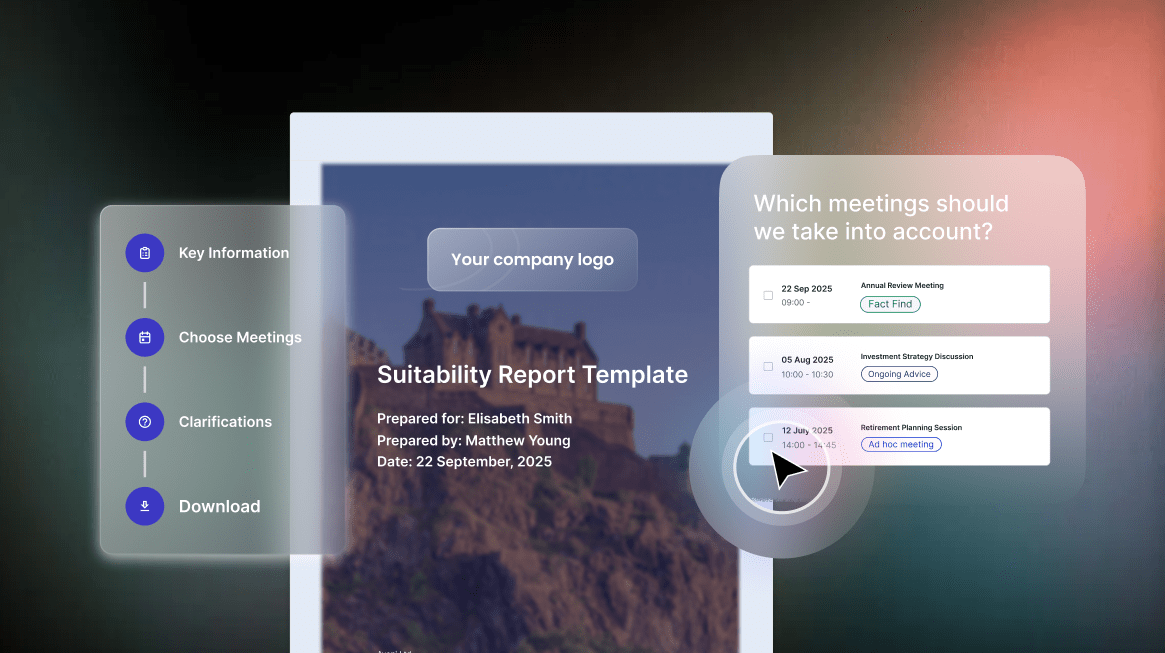

Adviser Productivity: Aveni Assist leverages FinLLM to automate meeting notes, CRM updates, and suitability report generation, saving advisers 75-80% of post-meeting administration time.

Regulatory Certainty: Both products incorporate built-in compliance checks aligned with Consumer Duty requirements and FCA expectations, reducing regulatory risk whilst increasing operational efficiency.
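The Compliance Monitoring point above, that sampling misses risks 100% monitoring would catch, comes down to simple arithmetic. The sketch below assumes each call is independently selected for review with probability `sample_rate`, a simplification of real QA sampling. The function name `chance_of_review` is hypothetical. Under that assumption, the probability that at least one of `n` problematic calls is reviewed is 1 − (1 − p)^n. With a 2% sample, a single problematic call has a 2% chance of being looked at, and even ten such calls give only about an 18% chance that any of them is reviewed.

```python
def chance_of_review(sample_rate: float, flagged_calls: int) -> float:
    """Probability that a random QA sample includes at least one of the
    problematic calls, assuming each call is independently selected for
    review with probability `sample_rate` (a simplifying assumption)."""
    if not 0.0 <= sample_rate <= 1.0:
        raise ValueError("sample_rate must be between 0 and 1")
    if flagged_calls < 0:
        raise ValueError("flagged_calls must be non-negative")
    # P(none reviewed) = (1 - p) ** n, so P(at least one reviewed) is its complement.
    return 1.0 - (1.0 - sample_rate) ** flagged_calls


# Compare typical 2-3% sampling with full (100%) coverage.
for rate in (0.02, 0.03, 1.0):
    for n in (1, 10):
        p = chance_of_review(rate, n)
        print(f"{rate:.0%} sample, {n} problem call(s): {p:.1%} chance any is reviewed")
```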

→ See how Aveni Detect addresses the 10 biggest quality assurance challenges

The Path Forward: Agentic AI in Financial Services

The September 2025 iteration of FinLLM marks another significant step forward. Developed with NVIDIA’s NeMo platform and accelerated computing, this version establishes safety standards for agentic AI adoption.

Agentic AI represents the next frontier: autonomous systems that can take actions, make decisions, and interact with customers without constant human oversight. In financial services, this capability requires unprecedented levels of trust, transparency, and control.

FinLLM’s safety framework addresses these requirements directly. It enables financial institutions to deploy AI agents for customer service interactions whilst maintaining regulatory compliance and customer protection standards.

Research from 2025 indicates that 50% of digital work in financial institutions will be automated using large language models. The institutions that succeed will be those that adopt domain-specific models with proper governance frameworks rather than generic solutions with retrofitted controls.

→ Learn why Aveni’s FinLLM sets new standards for enterprise-grade compliance

Practical Implications for Financial Services Leaders

For compliance officers, heads of operations, and transformation leaders evaluating AI adoption, the journey from BERT to FinLLM offers clear guidance:

Domain Specificity Matters: General-purpose models trained on internet data cannot match the accuracy and regulatory alignment of purpose-built financial models. Domain-specific training is essential for regulated applications.

Governance Cannot Be an Afterthought: Safety frameworks must be built into model architecture from the beginning, not added as external constraints later.

UK AI Sovereignty: As regulatory requirements intensify, access to UK-based models that align with local regulations becomes increasingly important. Dependence on US-based generic models creates strategic risk.

Proven Track Record: Look for solutions with demonstrated performance in live financial services environments, backed by major institutions that have validated the technology through real-world use.

The Verdict: From Research to Enterprise Reality

BERT proved that contextual understanding transforms language processing. The years since have shown that turning foundational research into enterprise value requires domain expertise, regulatory alignment, and practical implementation experience.

At Aveni, we’ve taken the lessons from BERT and built something specifically for financial services. FinLLM represents the convergence of world-leading NLP research and deep financial services expertise, creating AI that financial institutions can trust.

The evolution continues. As agentic AI becomes reality, the institutions that will lead are those that adopt purpose-built solutions with comprehensive safety frameworks. The choice facing financial services leaders is not whether to adopt AI, but which AI to adopt.

Ready to explore how domain-specific AI can transform your operations whilst maintaining regulatory compliance?

→ Discover Aveni Detect and Aveni Assist for AI-powered efficiency without complexity.

Aveni is an award-winning AI fintech building enterprise-grade solutions for UK financial services. Backed by Lloyds Banking Group and Nationwide, Aveni’s FinLLM sets new standards for safe, ethical AI adoption in regulated environments.