AI on Trial: The Burden of Proof

The accountability shift in AI governance

Walk into most financial services firms and ask whether they are using AI responsibly. You’ll hear about governance frameworks, oversight policies, and compliance sign-off processes. Ask whether they can prove that any individual customer conversation handled by AI went the way it should have, and the answers grow noticeably softer.

That hesitation points to where the industry actually stands in 2026. Two years ago, the question firms were asking about AI was simple: can we use it? That question has been overtaken by a harder one. Can you prove it is working correctly, for every customer, every day?

Under Consumer Duty and SMCR, that burden of proof sits with firms from the moment a model is switched on, and the FCA’s ongoing Mills Review is bringing it into sharper focus. Firms must proactively demonstrate that their AI performs in customers’ interests. Regulators are not required to find a problem first.

The distinction that matters for every senior manager is not between firms that have experienced AI failures and those that have not. It is between firms that can prove, right now, that nothing harmful is happening and that they would know immediately if it were, and those that cannot. Given enough time to investigate, most firms could establish the first. Very few can demonstrate the second.

Four forces converging in 2026

The accountability question is not new. What is new is the pressure converging around it from four directions at once, each making the gap between deploying AI and governing it harder to ignore.

The regulatory reckoning

In January 2026, the House of Commons Treasury Committee published its report on artificial intelligence in financial services. The findings were direct. Despite 75% of UK financial services firms now using AI, the Committee concluded that regulators’ approach left consumers and the financial system exposed to potentially serious harm. Committee Chair Dame Meg Hillier stated she did not feel confident the financial system was prepared for a major AI-related incident.

That same month, the FCA launched the Mills Review, examining the long-term impact of AI on retail financial services. The Review introduced the concept of a “proxy economy,” in which consumers increasingly interact with financial services through AI intermediaries rather than directly with firms themselves. Recommendations are expected to reach the FCA Board in summer 2026. Comprehensive, practical guidance on how Consumer Duty and SMCR accountability apply to AI is expected by the end of the year.

Then there is the EU AI Act, whose high-risk provisions take effect in August 2026. UK firms are not insulated from this by geography. If your AI systems are placed on the EU market or their outputs are used in the EU, and they fall into a high-risk category, the August 2026 deadline is likely to apply to you.

The deployment gap

The adoption numbers look promising; however, the execution numbers tell a different story. The FCA and Bank of England’s November 2024 joint research found that while 75% of UK financial services firms are using AI, only 2% of AI use cases run without human sign-off on individual decisions. McKinsey’s 2025 global survey found just 1% of firms describe their AI strategy as mature.

On AI agents specifically, NVIDIA’s January 2026 survey of over 800 financial services firms found only 21% had actually put AI agents into live production. The biggest barriers were governance concerns and data quality. Most firms want to do more with AI than they can currently stand behind.

The boardroom confidence deficit

Risk awareness at board level is high. The infrastructure to manage that risk is still developing. McKinsey’s March 2026 survey found nearly two-thirds of organisations cite security and risk concerns as the main barrier to expanding their use of AI agents, and that across almost every risk category, firms are better at identifying the risk than they are at actually managing it.

Under SMCR, a named senior manager is personally liable for what AI systems do within their area of responsibility. The FCA has confirmed that handing a decision to an algorithm does not transfer that liability. FCA Executive Director David Geale told the Treasury Committee that individuals are “on the hook” for AI harm under SMCR. Yet what a senior manager must actually do to demonstrate adequate oversight of an AI system remains undefined. There is no specific FCA guidance on it and no enforcement cases that set a precedent. That gap is a risk in itself.

The generic AI problem

Every major technology platform is pushing AI deployment faster. The tools are increasingly accessible and the commercial pressure to adopt is intense. What is almost entirely absent from that conversation is the question of how firms prove the AI they have deployed is safe, consistent, and compliant.

The FCA has been explicit. Its InvestSmart guidance states that general-purpose tools like ChatGPT and Gemini are not set up to assist with financial decisions and are not regulated. A 2025 Which? investigation tested six AI tools against 40 common consumer financial questions and found that all four major general-purpose models provided dangerous financial guidance, including recommending exceeding ISA contribution limits and giving incorrect tax advice. Neither ChatGPT nor Copilot corrected a deliberate error about ISA limits that could have led to HMRC penalties.

Generic AI deployed into a regulated environment does not come with accountability infrastructure. Governance, audit trails, explainability, and regulatory alignment have to be built deliberately. That is not a feature most platforms are selling. It is the space where purposeful, sector-specific accountability infrastructure becomes the differentiator.

The five governance gaps

These are the governance gaps that exist in most firms deploying AI today. Each one represents a question a regulator could reasonably ask, and that many firms currently struggle to answer.

Deploying AI agents you cannot supervise

SMCR’s Senior Manager Conduct Rule 1 requires named individuals to take reasonable steps to ensure the business for which they are responsible is controlled effectively. The FCA has confirmed this applies equally to AI systems. Delegating to algorithms does not transfer the liability.

Yet the Bank of England’s February 2026 roundtables with regulated firms found that traditional human-in-the-loop validation is increasingly acknowledged as not tenable for complex AI models, and second-line risk functions are approaching agentic AI cautiously, creating bottlenecks without resolving the underlying problem. If you cannot demonstrate supervision at the level of individual AI interactions, the gap is structural.

Giving advice with no retrievable audit trail

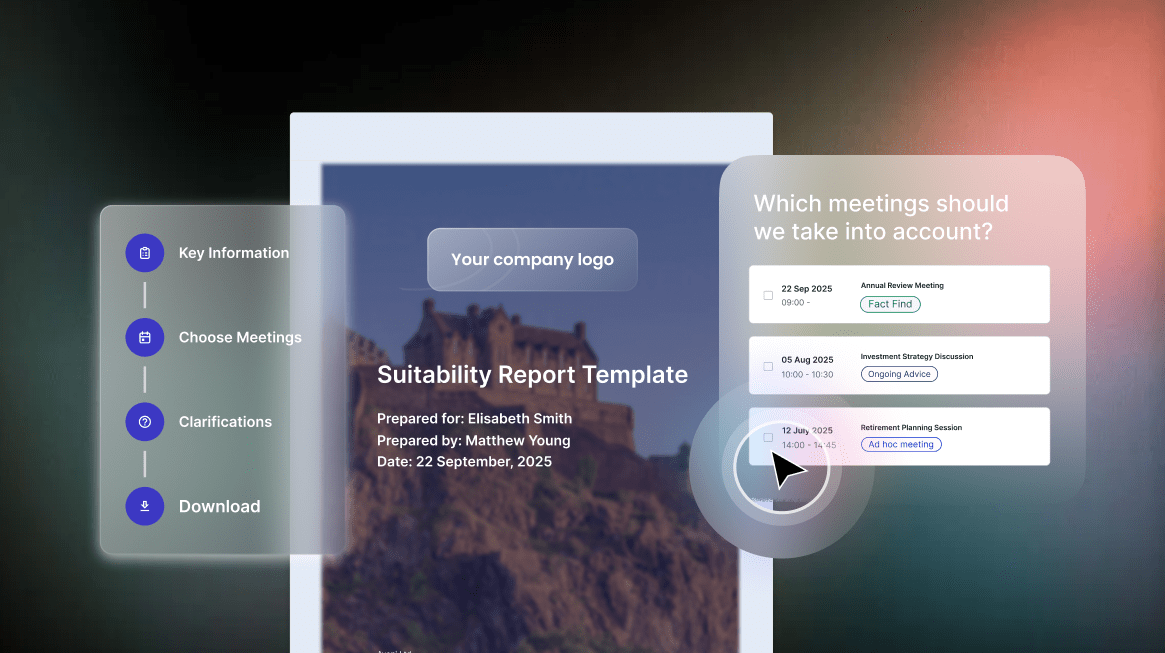

Consumer Duty requires firms to monitor and evidence consumer outcomes on an ongoing basis. COBS 9A requires records of suitability assessments, including what client information was obtained, what was recommended, and why. Where AI is assisting with or generating financial advice, every interaction needs a retrievable, structured record.

The FCA’s 2018 Robo-Advice Review found that advisers sometimes intervened in automated processes without recording the nature of that intervention, making it impossible to demonstrate suitability after the fact. Where AI is generating the advice, that problem scales with every interaction the system handles.
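To make the record-keeping point concrete, here is a minimal Python sketch of what one structured, retrievable record per AI-assisted advice interaction might hold. It assumes the fields mirror the COBS 9A elements named above (client information obtained, what was recommended, and why), plus the model version and any human intervention. The class name `AdviceInteractionRecord` and all of its fields are hypothetical illustrations, not a regulatory schema or any vendor’s API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AdviceInteractionRecord:
    """Hypothetical structured record for one AI-assisted advice interaction."""
    interaction_id: str
    client_information: dict   # what client information was obtained
    recommendation: str        # what was recommended
    rationale: str             # why it was recommended
    model_version: str         # which model produced or assisted the output
    human_intervention: Optional[str] = None  # nature of any adviser override, if one occurred
    recorded_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def missing_fields(self) -> list[str]:
        """Return the names of core fields left empty, so gaps are flagged when the record is written."""
        core = {
            "client_information": self.client_information,
            "recommendation": self.recommendation,
            "rationale": self.rationale,
            "model_version": self.model_version,
        }
        return [name for name, value in core.items() if not value]


# Illustrative record with the rationale left blank.
record = AdviceInteractionRecord(
    interaction_id="int-0001",
    client_information={"risk_appetite": "medium", "horizon_years": 10},
    recommendation="Global equity index fund",
    rationale="",
    model_version="advice-model-v3",
)
print(record.missing_fields())  # ['rationale']
```

Checking for empty core fields at write time, rather than at a later review, is the design choice that matters here; the optional `human_intervention` field gives an adviser override a place to be recorded alongside the output it changed.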

Sampling 3% of interactions and calling it oversight

Industry analysis suggests that 1 to 3% is the typical manual sampling rate in financial services quality assurance. For a firm processing thousands of customer interactions daily, that means the vast majority of conversations receive no review at all. Consumer Duty’s outcomes monitoring requirements impose an obligation to monitor and evidence what is actually happening to customers, not merely to review a sample and extrapolate.
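The arithmetic behind that claim is simple to sketch. The Python below assumes a hypothetical volume of 5,000 customer interactions a day (not a figure from any of the research cited here) and applies the 1% and 3% sampling rates mentioned above; `sampling_coverage` is an illustrative helper, not a standard QA tool.

```python
def sampling_coverage(daily_volume: int, sample_pct: int) -> tuple[int, int]:
    """Return (reviewed, unreviewed) interaction counts for one day of manual QA sampling.

    sample_pct is a whole-number percentage, e.g. 3 for a 3% sample.
    """
    if not 0 <= sample_pct <= 100:
        raise ValueError("sample_pct must be between 0 and 100")
    reviewed = daily_volume * sample_pct // 100  # integer arithmetic avoids float rounding
    return reviewed, daily_volume - reviewed


# Hypothetical volume: 5,000 customer interactions a day.
for pct in (1, 3):
    reviewed, unreviewed = sampling_coverage(5_000, pct)
    print(f"{pct}% sample: {reviewed} reviewed, {unreviewed} unreviewed per day")
# 1% sample: 50 reviewed, 4950 unreviewed per day
# 3% sample: 150 reviewed, 4850 unreviewed per day
```

At either rate, 97 to 99% of the day’s conversations receive no review at all, and that proportion holds whatever the daily volume.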

The FCA’s June 2024 multi-firm review of insurance firms found monitoring approaches were “overly focused on processes being completed rather than on the outcomes delivered,” with few firms able to show that monitoring had directly led to action. Sampling was not the problem the FCA identified. Treating sampling as sufficient oversight was.

Allowing poor guidance quality to scale unchecked

When AI gives poor guidance, it does so at a scale no human workforce could replicate. Consumer Duty’s product and service outcome standards apply to every interaction. Vulnerable customer obligations intensify that further, requiring firms to actively monitor outcomes for customers with characteristics of vulnerability, not to assume consistency from periodic spot checks.

The FCA’s own May 2025 research into large language models found that checking whether AI outputs are accurate and appropriate requires both human judgment and automated tools working together. An AI system left to run without that oversight does not stay consistent. Its errors compound quietly across thousands of conversations before anyone notices.

Using AI that cannot explain itself or show where it came from

The PRA’s model risk management principles (SS1/23), in effect since May 2024 for firms with approval to use internal models for regulatory capital, expect any model the firm uses, including AI models, to be documented thoroughly enough for an independent reviewer to understand how it works and check its outputs. That expectation applies to bought-in models as well as ones built in-house. A general-purpose AI model, trained on general internet data, with no documentation specific to financial services use cases, cannot meet that standard.

For UK firms with EU exposure, the EU AI Act adds further requirements: AI systems used to assess the creditworthiness of individuals, or for risk assessment and pricing in life and health insurance, are classed as high-risk and must have full technical documentation and automatic logging of events throughout their operation. The deadline for compliance is August 2026. A model with no financial services provenance, no documented methodology, and no audit logging cannot be made compliant by wrapping it in a policy document.

Sector-specific considerations

The accountability challenge plays out differently depending on the sector. The regulatory hooks are consistent across financial services, but the specific products, customer populations, and risk concentrations vary significantly.

Wealth and advice

Suitability obligations and Consumer Duty’s outcomes monitoring make this the sector where audit trail infrastructure matters most. The FCA’s February 2025 review of ongoing financial advice services, covering the 22 largest advice firms, found suitability reviews were delivered in approximately 83% of cases. That means roughly one in six reviews could not be shown to have been delivered, even before AI enters the picture. The new targeted support regime, effective April 2026, introduces additional AI governance considerations for firms using AI to deliver personalised nudges and recommendations.

Banking

Customer interactions at scale, complex SMCR liability chains, and the Bank of England’s increasingly sharp expectations under SS1/23 make banking a sector where governance infrastructure needs to perform at volume. Lloyds Banking Group has publicly targeted over £100 million in AI value in 2026. The governance question is how firms with that level of AI ambition demonstrate oversight at a matching scale.

Insurance

Insurance sits alongside international banks at the top of UK financial services AI adoption rates, according to evidence submitted to the Treasury Committee. The FCA’s June 2024 multi-firm review of insurance outcomes monitoring found wide variation in quality across the sector. Vulnerable customer handling, claims processing, and guidance failures going undetected under sampling-based QA are the specific governance challenges that high adoption rates are intensifying.

Mortgages

The FCA’s Mortgage Rule Review explicitly references AI as a tool for helping brokers give faster and better advice, while leaving the final decision with a human. The governance requirement follows from that framing. Advice consistency at volume, and the specific suitability record-keeping obligations for mortgage recommendations, need oversight infrastructure that scales with the technology being deployed.

Pensions

The impact of AI-influenced decisions in pensions may not surface for years or decades. That makes real-time monitoring of guidance quality and consistency more important here than in almost any other context, not less. The Pensions Dashboards programme’s connection deadline for in-scope schemes falls in October 2026. The targeted support regime creates a new category of AI-relevant regulated activity from April 2026. The governance questions for this sector are not approaching slowly.

Where does your firm stand?

There are five governance gaps described on this page. Each one maps to a question a regulator, a senior manager, or your own board could reasonably ask today.

The AI Defence Scorecard turns those five gaps into five direct questions. If your firm can answer yes to all of them, your defence is strong. If not, you know where the gaps are and where to focus first.