Part of the AI on Trial: The Burden of Proof campaign series

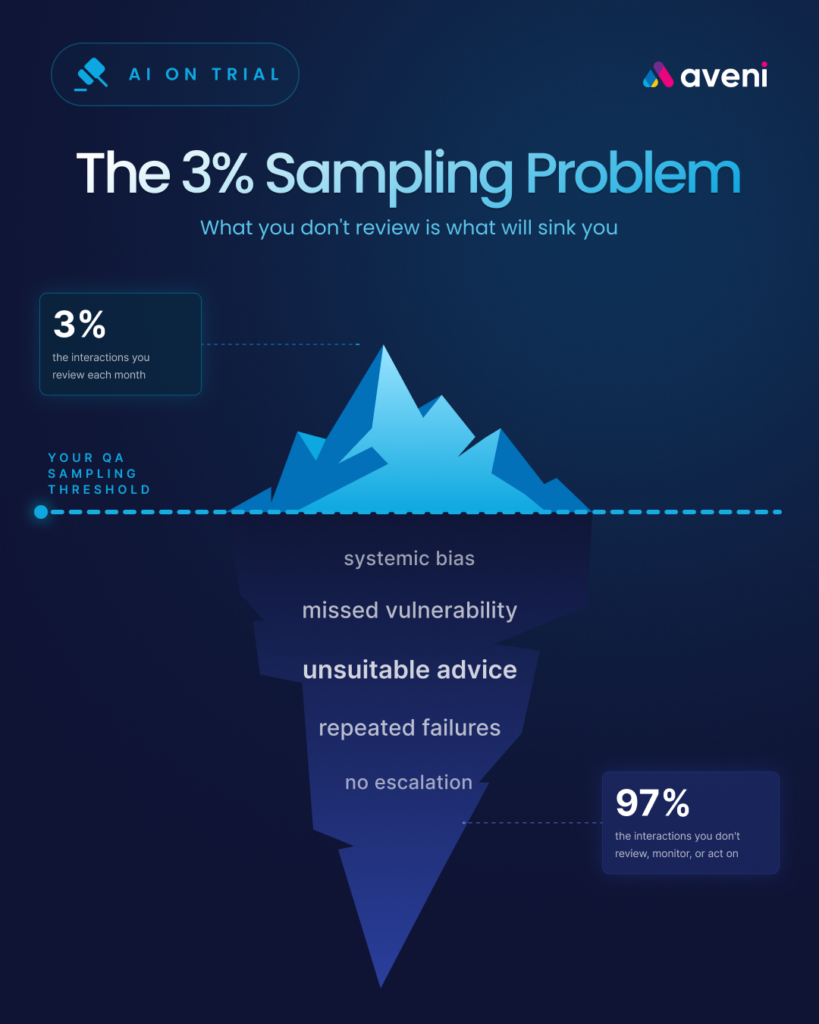

Count III: Sampling 3% of interactions and calling it oversight

A firm deployed AI across its adviser network and customer service operations. When asked how it monitors outcomes, the firm pointed to its QA programme: a team of assessors reviewing a selection of calls each month. The sample covered 3% of total interactions. The remaining 97% were never reviewed. The firm’s board report said no significant harm had been identified. But reporting no harm is not the same as proving none occurred. It means the reporting fell short. The absence of evidence is not the evidence of absence. The firm called it compliance. The FCA would call it a gap.

What 3% sampling actually means for a firm with 100,000 customer interactions

Picture a mid-sized financial advice firm that handles 100,000 customer interactions a year. That number sounds large, but for many firms it sits on the conservative end.

A 3% QA sample means the compliance team reviews 3,000 of those interactions. That seems like meaningful coverage until you flip the number around. The other 97,000 conversations never got examined. Nobody listened to them. Nobody read the transcripts. Nobody checked whether the customer understood the product, whether the adviser identified vulnerability, or whether the recommendation was suitable.

When a conduct risk issue appears in one of those 97,000 interactions, the firm has nothing to show for it. No record of it being identified. No audit trail showing what happened next. From a compliance perspective, the interaction may as well have never taken place.

QA Managers tend to land on the same uncomfortable question once they sit with these numbers. What if the issues are not evenly distributed? What if the interactions most likely to go wrong are also the ones least likely to appear in a random sample?

Vulnerable customer conversations, complex product discussions, and interactions where AI-assisted recommendations diverge from standard guidance all sit in the harder-to-reach part of the population. Random selection misses them more often than it catches them. They also produce more poor outcomes than the rest of the caseload, and they sit at the top of the FCA’s list of concerns.

As AI agents begin providing guidance directly to customers, this risk scales further. A human adviser handles a finite caseload. An AI agent can dispense guidance around the clock, across thousands of conversations a day. When that guidance contains an error, the error does not stay in one adviser’s caseload. It spreads across the entire customer base, continuously, until someone catches it. A 3% sample of a 24/7 AI agent’s output is even less likely to catch a systemic problem than a 3% sample of a human team’s work.

For a full breakdown of all five governance gaps firms face when deploying AI, see the complete framework → AI Governance in UK Financial Services: The Accountability Framework

What Consumer Duty expects firms to evidence about customer outcomes

Consumer Duty sets a clear standard. Firms have to act to deliver good outcomes for retail customers, and they have to evidence that they are doing so. The four outcomes cover products and services, price and value, consumer understanding, and consumer support. The cross-cutting rules require firms to act in good faith, avoid causing foreseeable harm, and help customers pursue their financial objectives.

The word that carries the most weight in that framework is “evidence.” The FCA does not accept assertions. It expects data. Specific data, tied to specific outcomes, showing what happened across the customer base and what the firm did when things fell short.

The FCA examined 180 firms in its review of the first annual Consumer Duty board reports. It found boards approving reports without documented evidence of challenge. Summary statistics built on incomplete monitoring failed the standard. The regulator made the point plainly: completing a process is one thing, evidencing the outcome is another.

For firms relying on sample-based QA, this creates a structural problem. When the FCA asks whether good outcomes are being delivered consistently across all customers, a sampling-based firm has only one honest answer to give: we do not know. We know about the ones we checked.

The FCA expects monitoring to match the firm’s scale and the risk profile of its activities. Firms deploying AI into advice and customer interactions face a higher risk profile because a single AI error can spread through thousands of conversations before anyone notices. Proportionate monitoring at that scale means covering the full volume of AI-assisted interactions, since the harm spreads faster than human review can catch up.

→ Explore how Consumer Duty technology supports the 2026 outcomes roadmap for banks and wealth firms

Three reasons sampling fails under Consumer Duty

Sampling has been the foundation of QA in financial services for decades. It worked reasonably well when most interactions happened between a human adviser and a human customer, when the volume stayed manageable, and when regulators cared more about process compliance than outcome evidence.

Consumer Duty has changed all three of those conditions. Three problems now follow from that shift.

The coverage problem comes first. Random sampling cannot reliably surface the issues that cluster in specific adviser behaviours, product areas, or customer segments. Aveni’s analysis of firms moving from sampling to full coverage consistently surfaces issues in the previously unreviewed interactions that the sample missed entirely. The issues were there. The sample was not looking in the right places.

The timing problem comes next. Sample-based reviews look backwards. A QA assessor listens to a call from last month, identifies a problem, and flags it. By the time the flag goes up, the adviser may have had dozens more conversations carrying the same issue. The customer who received poor advice has already acted on it. The harm has already happened. The FCA expects firms to monitor outcomes in a timely way so that emerging issues get addressed quickly. Reviewing calls weeks or months after they happened falls short of that expectation.

The consistency problem closes the picture. Two QA assessors reviewing the same interaction will often score it differently. Manual assessment is subjective by nature. The criteria may be defined, but their application varies between individuals, between teams, and between review sessions. That inconsistency makes it harder to draw reliable conclusions from the small number of interactions that get reviewed. When assessors disagree on what counts as a good outcome in the 3% they review, the evidence base becomes unreliable before it ever reaches the board.→ Read why manual sampling creates regulatory risk under Consumer Duty

How systemic risk hides in the interactions nobody reviews

The deepest problem with sampling has nothing to do with statistics. Sampling was designed for a world where most failures show up randomly and spread out evenly across the population. Consumer Duty failures behave differently. They cluster.

A single adviser with a poor understanding of a product will give the same unsuitable recommendation to every client they see with a similar profile. That kind of failure does not look random. It repeats across dozens or hundreds of interactions. A random sample rarely picks up enough of those interactions to identify the pattern. Full coverage catches it the moment it shows up.

AI-assisted advice adds another layer to the problem. When an AI tool generates a flawed recommendation, it does not make the error once. It makes the same error every time the same conditions arise. When the AI misinterprets a vulnerability indicator, misapplies a suitability threshold, or hallucinates a product feature, the error gets replicated across every client interaction where the same trigger appears.

The Stanford AI Index Report 2026 shows these risks getting worse. The AI Incident Database recorded 362 documented incidents in 2025, up from 233 in 2024, a 55% increase in a single year. Among organisations that reported AI incidents, the share experiencing three to five repeat incidents climbed from 30% in 2024 to 50% in 2025. These are repeat patterns rather than one-off failures.

Confidence in handling those incidents has gone the other way. Organisations rating their incident response as “excellent” dropped from 28% to 18%. Those rating themselves “good” fell from 39% to 24%.

Rising incident rates, falling response confidence, and a monitoring approach that examines only a fraction of interactions add up to a system designed to miss problems while they multiply.

The hallucination data underscores why AI-assisted interactions need particular attention. The AA-Omniscience benchmark, published in the 2026 AI Index Report, tested 26 leading AI models on factual accuracy. Hallucination rates ranged from 22% to 94%. Even the best-performing models get things wrong in roughly one in five answers. When those models help generate financial advice, a 3% sample cannot reliably identify which interactions carried errors and which did not.

A sample catches random failures. Systemic failures need complete coverage to surface at all. And the value of complete coverage goes beyond catching problems. When every interaction is reviewed, the firm can surface trends across its entire adviser population: which products generate the most confusion, which customer segments receive inconsistent guidance, where training gaps are widest. Those patterns turn into actionable insights that improve advice quality across the business, not a compliance exercise that ticks a box.

Why inaccuracy has become the top AI risk for organisations

The data on how organisations perceive AI risk tells an interesting story. The McKinsey and AI Index joint survey from 2025 found that 74% of respondents now consider inaccuracy a relevant AI risk, up from 60% the year before. That move represents a 14-percentage-point increase in twelve months. Inaccuracy has overtaken cybersecurity, which sits at 72%, as the single most-cited concern.

Among firms actively mitigating risks, 71% report taking steps to address inaccuracy. That figure keeps growing.

The numbers reveal a contradiction at the heart of how many firms operate. Firms acknowledge that AI output quality represents their biggest risk. They invest in mitigation. Their monitoring infrastructure still examines only a fraction of the outputs their AI produces. Risk identification has run ahead of the tooling that should support it.

Compliance Officers reading those numbers tend to land on the same direct question. The firm has identified inaccuracy as the primary risk of its AI deployment. How much of the AI’s output is the firm actually checking? When the answer comes back at 3%, the risk assessment and the monitoring approach have stopped lining up.

The same survey found that 62% of organisations cite security and risk concerns as the primary obstacle to scaling agentic AI. Firms trying to move fastest with AI also tend to be the ones most aware that their governance infrastructure has not kept up. The monitoring gap acts as a bottleneck, and firms know it.

What 100% monitoring looks like in practice

Moving from sampling to full coverage does not mean hiring 50 additional QA assessors. It means changing what monitoring does in the first place.

Sampling makes monitoring a manual process. A human reviews a small number of interactions against a checklist. The output comes back as a score and sometimes a set of notes. The process moves slowly, costs a lot, and stops at however many calls a person can listen to in a day.

Full-coverage monitoring works differently. Every interaction gets assessed automatically against defined criteria. The technology reads or listens to the interaction, evaluates it against the firm’s compliance framework, and flags anything that falls outside acceptable parameters. The human reviewer stops listening to random calls and starts investigating the ones the system has flagged as high risk.

That shift changes what becomes visible. Patterns that stayed invisible in a 3% sample show up clearly at 100% coverage. An adviser who consistently fails to check customer understanding appears as a trend rather than a single data point. A product that generates confusion across multiple customer segments surfaces within days rather than months. A vulnerability indicator missed in a call six weeks ago gets caught in real time.

The evidence base changes alongside it. The firm produces evidence covering every customer conversation rather than a QA report built on 3,000 reviewed interactions. That evidence comes structured, searchable, and tied to specific Consumer Duty outcomes. When the board asks for evidence of good outcomes, the firm points to data covering the entire customer base. When the FCA asks the same question, the answer holds up.

The speed of response shifts too. Sampling lets a systemic issue take weeks or months to surface. Full coverage flags the pattern as soon as the system has enough data to identify it. The firm intervenes before the issue spreads to hundreds more customers.

How governance investment is accelerating but tooling gaps persist

Firms understand the monitoring gap exists. Investment has started moving in the right direction, even though most firms remain in the early stages.

The 2025 McKinsey and AI Index survey shows AI-specific governance roles growing 17% in 2025. The share of businesses with no responsible AI policies in place fell sharply, from 24% to 11%. These shifts matter. Firms have started creating accountability structures, hiring governance specialists, and writing policies.

Policies and roles cannot close the monitoring gap on their own. The top barriers to implementing responsible AI sit elsewhere. Knowledge gaps lead the list at 59% of respondents, up from 51% in 2024. Budget constraints follow at 48%, and regulatory uncertainty at 41%.

Firms specifically trying to scale agentic AI face a different barrier profile. Security and risk concerns dominate at 62%, followed by technical limitations at 38% and regulatory uncertainty at 38%. Gaps in responsible AI tooling and control come in at 36%.

That last number connects most directly to monitoring. More than a third of firms trying to scale AI admit they lack the tools to govern it. The policy says “monitor customer outcomes.” The tooling needed to monitor 100,000 interactions a year at full coverage remains the part still being built.

The regulatory timeline adds urgency. The FCA’s Mills Review will report in summer 2026. The EU AI Act high-risk provisions take effect in August 2026. The FCA has signalled that multi-firm reviews, sector-specific scrutiny, and enhanced board reporting requirements all sit on its 2025 to 2026 agenda. Firms that arrive at those reviews still relying on 3% sampling will need a strong explanation for why 97% of their customer interactions go unexamined.

How Aveni Detect monitors every customer interaction

The charge in Count III is sampling 3% of interactions and calling it oversight. The defence is monitoring 100% and producing the evidence to prove it.

Aveni Detect is built to deliver that evidence. It analyses every customer interaction across voice, chat, documents, and digital channels. It assesses each interaction against the firm’s compliance framework automatically, flagging conduct risk, vulnerability indicators, suitability concerns, and Consumer Duty outcome markers.

The shift for the QA team has nothing to do with workload. It changes the focus. The team stops spending assessor time listening to random calls and starts investigating the interactions Aveni Detect has flagged as high risk. Assessor effort goes where it matters most rather than spreading thinly across a random sample.

The firm ends up with structured evidence covering the entire customer base rather than extrapolated estimates from a fraction. Board reports draw on data from every interaction rather than a sample of 3,000. When the FCA asks how the firm monitors customer outcomes, the answer comes back specific: every interaction, assessed against defined criteria, with a documented audit trail.

Think of Aveni Detect as Exhibit C in the trial: circumstantial evidence. In a courtroom, circumstantial evidence builds a case through the accumulation of individual data points that, taken together, reveal a pattern. Aveni Detect provides the equivalent for customer monitoring: patterns of harm, patterns of good practice, and the evidence that links individual interactions to firm-wide outcomes.

Firms that answer Count III successfully share one common move. They stopped treating 3% as sufficient and built the infrastructure to see everything. The evidence only exists when someone looks for it.

Where does your firm stand?

The five governance gaps outlined in the AI on Trial series cover the questions regulators, boards, and senior managers are asking now. The sampling gap determines whether your firm can evidence outcomes across the full customer base or only the fraction it reviewed.

See how Aveni helps firms move from sampling to full coverage →

This article is part of Aveni’s AI on Trial: The Burden of Proof campaign series.

Read the full series:

- AI Governance in UK Financial Services: The Accountability Framework

- Count I: SMCR Compliance and AI Agent Oversight

- Count II: AI Advice Without an Audit Trail

- Count III: Why Sampling 3% Falls Short of Consumer Duty

- Count IV: When AI Guidance Goes Wrong at Scale

Sources cited in this article:

- FCA, Consumer Duty: Principle 12, cross-cutting rules, four outcomes (2023, updated ongoing)

- FCA, Review of Consumer Duty board reports: 180-firm review (2025)

- FCA, Review of the Long-Term Impact of AI on Retail Financial Services (Mills Review, January 2026)

- Stanford HAI, AI Index Report 2026, Chapter 3: Responsible AI

- AI Incident Database (AIID), incident count 2024-2025

- McKinsey & Company / AI Index Responsible AI Survey (2025)

- Artificial Analysis, AA-Omniscience hallucination benchmark (2026)

- EU AI Act implementation timeline, high-risk provisions (August 2026)