Part of the AI on Trial: The Burden of Proof campaign series

Count II: Giving guidance with no retrievable AI audit trail

A firm deployed AI to support its advice process. The AI helped draft suitability letters, summarise client meetings, and surface product options for advisers to review. When asked to produce a complete record of any single interaction, the firm could not. The output was saved. The reasoning was not. The chain from AI input to customer outcome was broken at every link.

Today, AI agents in UK financial services are not giving advice. They are providing guidance, drafting documents, and supporting human advisers who retain accountability for the final recommendation. That line matters. Advice is a regulated activity under the Financial Services and Markets Act 2000. Guidance is not. The current generation of AI tools sits on the guidance side of that line, with a human in the loop for anything that counts as a personal recommendation.

But the audit trail problem exists now, before AI crosses that line. Every AI-generated meeting summary, draft letter, or product suggestion feeds into a final piece of advice. If the firm can’t show what the AI produced, what the adviser changed, and why, the evidence chain behind the advice is incomplete. And regulators are already asking firms to produce that evidence.

In October 2025, the Bank of England’s PRA told lenders their AI model monitoring was “not frequent enough”. In March 2026, Starling CIO Harriet Rees, the government’s financial services AI champion, proposed a standardised independent testing regime, arguing that firms currently rely on internal checks with no shared benchmark. The direction is clear. Firms need to evidence what their AI is doing and how it is being monitored. That obligation applies to guidance today, and with more force to advice tomorrow.

What the FCA expects firms to record when AI assists with financial advice

The rules on record-keeping for financial advice are specific and long-established.

COBS 9A requires firms to produce a suitability report that sets out the advice given and how the recommendation meets the client’s objectives, financial situation, and needs. The report must show what client information was obtained, what was recommended, and why. These records must be retrievable for years after the advice was given.

Consumer Duty adds a layer on top. Under Principle 12, firms must act to deliver good outcomes for retail customers. The cross-cutting rules require firms to act in good faith, avoid causing foreseeable harm, and enable customers to pursue their financial objectives. Firms must monitor whether these outcomes are actually being delivered and produce evidence that they are.

None of this is new. Financial advisers have been producing suitability records for decades. The regulatory expectations are clear and well-understood.

What has changed is who is doing the drafting.

When AI assists with the advice process, including drafting suitability reports or summarising client conversations, the same record-keeping requirements apply. The FCA has been explicit about this. The suitability record must capture the same information regardless of whether a human adviser or an AI tool produced the recommendation: what the client told the firm, what the firm recommended, and why.

The difference is scale. A human adviser handles a caseload of hundreds of clients. An AI tool can process thousands of interactions per day. Manual record-keeping worked for one. It does not work for the other.

For a full breakdown of all five governance gaps firms face when deploying AI, see the complete framework → AI Governance in UK Financial Services: The Accountability Framework

Why most AI-assisted advice leaves no retrievable record

Here is what typically happens when AI is used in the advice process today.

In the most common scenario, a client has a meeting with an adviser. The firm’s AI tool listens to the conversation or reads the meeting notes. It pulls in the client’s financial data from the CRM. It generates output, maybe a product suggestion, a draft suitability letter, or a set of options for the adviser to review. The adviser looks at the output, makes changes, and presents the final recommendation to the client.

A second scenario is becoming more common. The AI agent converses directly with the customer, without an adviser in the loop. It answers questions, explains products, and provides guidance on financial decisions. The customer interacts with the AI the same way they would interact with a contact centre agent, except the transcript, the reasoning, and the basis for what the AI said often sit across different systems, if they are captured at all. At some point, whether through regulatory change or commercial pressure, the scope of what these agents are permitted to do will expand from guidance toward advice. When it does, firms that have not built an audit trail for today’s guidance interactions will be starting from zero.

At each stage of the process, information is created. In some firms it sits in disconnected systems. The AI tool holds its output, the CRM holds the client data, the meeting notes platform holds the conversation record, and the compliance file holds the final letter. In firms further along the integration curve, APIs, middleware, and case management systems pull these records together into something closer to a single view.

The gap is rarely at the integration layer. It is at the reasoning layer. Most systems capture what the AI produced. Very few capture why. Fewer still record what the AI considered and rejected, what confidence level it attached to its output, what data points it drew on, or what the adviser changed when they reviewed it. Even the most integrated case management system can only surface the records that were captured in the first place. If the AI reasoning was never logged, no amount of integration will reconstruct it.
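As a minimal sketch of what logging the reasoning layer could look like, the Python below serialises one AI output together with the data points it drew on, the alternatives it rejected, and its stated confidence. Every name here (`ReasoningRecord`, the field names, the example values) is an illustrative assumption, not a description of any particular vendor's schema.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ReasoningRecord:
    """One AI output plus the context needed to explain it later (hypothetical schema)."""
    interaction_id: str
    model_version: str
    data_points_used: list[str]    # which client facts the model drew on
    output: str                    # what the AI produced
    rationale: str                 # why it produced it
    alternatives_rejected: dict[str, str] = field(default_factory=dict)  # option -> reason
    confidence: Optional[float] = None  # model-reported confidence, if available
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json_line(self) -> str:
        """Serialise to a single JSON line suitable for an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)


# Made-up example values, for illustration only.
record = ReasoningRecord(
    interaction_id="int-0001",
    model_version="example-model-v1",
    data_points_used=["age", "attitude_to_risk", "existing_pension_value"],
    output="Suggest the adviser reviews a pension top-up.",
    rationale="Client objective is retirement income; risk profile is cautious.",
    alternatives_rejected={"stocks_and_shares_isa": "ISA allowance already used"},
    confidence=0.72,
)
line = record.to_json_line()
print(line)
```

The point of the design is that the rationale, rejected options, and confidence are written at the moment the output is generated. They cannot be recovered later from the output alone.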

This is the structured record a regulator will ask for, and it is the one most firms can’t produce. Not because their systems are siloed, but because the layer that matters, the decision chain behind the output, is rarely recorded at all. Three questions sit at the heart of any regulatory inquiry: what happened in this interaction, what did the AI contribute, and can you prove the outcome was suitable? Answering them requires evidence that most current implementations, integrated or not, do not generate.

The FCA flagged this problem years ago. Its 2018 Robo-Advice Review found that advisers sometimes intervened in automated processes without recording the nature of those interventions. When a human adviser overrides or adjusts an AI recommendation, that adjustment needs to be documented. What was the original AI output? What did the adviser change? Why? Without answers to those questions, the firm can’t demonstrate suitability after the fact.
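One way to answer those three questions is to store the AI draft and the adviser's final text side by side and derive the changes from them. The sketch below uses Python's standard `difflib` module; the `AdviserReview` class and the sample text are hypothetical and only illustrate the idea.

```python
import difflib
from dataclasses import dataclass


@dataclass(frozen=True)
class AdviserReview:
    """Keeps the AI draft, the adviser's final text, and the reason together."""
    ai_draft: str
    adviser_final: str
    reason: str  # the adviser's own explanation for the change

    def changes(self) -> list[str]:
        """Lines removed from the draft ('- ') and lines added by the adviser ('+ ')."""
        diff = difflib.ndiff(
            self.ai_draft.splitlines(),
            self.adviser_final.splitlines(),
        )
        # ndiff also emits unchanged ('  ') and hint ('? ') lines; drop those.
        return [line for line in diff if line.startswith(("- ", "+ "))]


# Made-up example content, for illustration only.
review = AdviserReview(
    ai_draft="Recommended product: Fund A\nRisk profile: balanced",
    adviser_final="Recommended product: Fund B\nRisk profile: balanced",
    reason="Fund A is closed to new investors.",
)
for change in review.changes():
    print(change)
```

Because both versions are preserved, the diff can be regenerated at any time. The stored reason is the only part that depends on the adviser writing something down at the point of review.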

That finding was based on relatively simple automated advice tools. AI-assisted advice in 2026 is far more complex. The models are more capable, the interactions are faster, and the volume is higher. The record-keeping gap has grown with every upgrade.

Think of it as a chain of custody problem. In criminal law, chain of custody means that every person who handled a piece of evidence is documented. If there is a gap anywhere in the chain, the evidence becomes unreliable. The same logic applies to AI-assisted advice. If you can’t trace the path from client data input, through AI recommendation, through adviser review, to customer outcome, the chain is broken. And a broken chain means the evidence of suitability is incomplete.

AI incidents are rising while firms grow less confident in their response

The audit trail gap matters most when something goes wrong. Without retrievable records, firms can’t investigate what happened, how many customers were affected, or whether the issue was a one-off or a pattern.

The Stanford AI Index Report 2026 shows that AI incidents are becoming more common across all industries. The AI Incident Database recorded 362 documented incidents in 2025, up from 233 in 2024. That is a 55% increase in a single year. The OECD’s separate tracker, which casts a wider net using automated news monitoring, recorded 435 incidents in January 2026 alone.

These are not all financial services incidents. But they illustrate the direction of travel: as AI is deployed into more decision-making contexts, the number of things that go wrong is growing.

More concerning is what happens after an incident occurs. According to the McKinsey and AI Index joint survey (2025), among organisations that reported experiencing AI incidents, the share that experienced three to five incidents rose from 30% in 2024 to 50% in 2025. These are repeat events, not isolated failures.

At the same time, organisations’ confidence in their ability to respond is falling. Those rating their incident response as “excellent” dropped from 28% to 18%. Those rating themselves “good” fell from 39% to 24%. The share describing their response as merely “satisfactory” rose from 19% to 32%.

For a Head of Advice or Compliance Officer reading those numbers, the question is direct: if one of your AI-assisted interactions led to a customer complaint tomorrow, could you reconstruct the full chain of events? Could you identify every customer who received a similar recommendation? Could you do it in hours rather than weeks?

Without a structured audit trail, the answer for most firms is no.
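To make the contrast concrete: when interaction records are structured, identifying every affected customer becomes a simple filter. The following is a sketch only. `InteractionRecord`, its fields, and the sample data are assumptions made for illustration, and a real system would query a database rather than an in-memory list.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class InteractionRecord:
    """Minimal structured record of one AI-assisted interaction (hypothetical)."""
    customer_id: str
    interaction_date: date
    model_version: str
    recommended_product: str


def customers_with_similar_recommendation(
    records: list[InteractionRecord],
    product: str,
    model_version: str,
    since: date,
) -> list[str]:
    """Return sorted, de-duplicated customer IDs matching the incident profile."""
    return sorted({
        r.customer_id
        for r in records
        if r.recommended_product == product
        and r.model_version == model_version
        and r.interaction_date >= since
    })


# Made-up example data, for illustration only.
records = [
    InteractionRecord("cust-1", date(2026, 1, 10), "v2", "Fund A"),
    InteractionRecord("cust-2", date(2026, 1, 12), "v2", "Fund B"),
    InteractionRecord("cust-3", date(2026, 2, 3), "v2", "Fund A"),
    InteractionRecord("cust-4", date(2025, 11, 30), "v1", "Fund A"),
]
affected = customers_with_similar_recommendation(
    records, product="Fund A", model_version="v2", since=date(2026, 1, 1)
)
print(affected)
```

The query is trivial. What makes it possible is that the product, model version, and date were captured as fields at the time, rather than buried in free text.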

Hallucination rates show why recording every AI output matters

One of the strongest arguments for recording AI outputs is that the AI will sometimes get things wrong, and you need to be able to find out when it did.

AI models produce plausible-sounding text that is factually incorrect. The industry calls this hallucination. In financial advice, a hallucination might mean an AI tool cites a tax threshold that doesn’t exist, recommends a product the client isn’t eligible for, or overstates the projected return on an investment.

The scale of this problem is significant. The AA-Omniscience benchmark, published in the 2026 AI Index Report, tested 26 of the leading AI models on factual accuracy across six domains including law and health. Hallucination rates ranged from 22% at the best-performing end to 94% at the worst. The variation is enormous. Even models from the same provider performed very differently depending on the domain and the type of question.

A separate benchmark (KaBLE) tested whether AI models can distinguish between established facts and statements framed as personal beliefs. When a false statement was presented as something a third party believes, leading models handled it well. When the same false statement was presented as something the user believes, performance collapsed. GPT-4o’s accuracy dropped from 98.2% to 64.4%. DeepSeek R1 fell from over 90% to 14.4%.

This matters for financial advice because client conversations frequently involve beliefs about their financial situation that may or may not be accurate. A client might say they believe their pension is sufficient for retirement. A client might say they believe they qualify for a particular tax relief. If the AI tool treats those beliefs as facts and builds a recommendation on top of them without checking, the advice will be wrong.

The only way to catch these errors after the fact is to record what the AI produced and review it. If the output is not captured in a structured, retrievable format, nobody will know the recommendation was built on a hallucinated data point until the customer complains, or until the FCA asks.

But catching errors after the fact is the weaker half of the answer. The KaBLE finding points to something more important. If an AI model’s accuracy collapses when a user states a false belief with confidence, the model cannot be trusted to self-correct inside a client conversation. A client who firmly believes they qualify for a tax relief they do not qualify for will, on current performance, often get that belief confirmed rather than challenged. The model will then build a recommendation on top of it.

The fix is not only better logging. It is better guardrails. An AI tool used in the advice process needs to be built to treat client statements about their own circumstances as inputs to be verified, not as facts to be accepted. It needs to cross-reference what the client says against the firm’s own authoritative data, flag inconsistencies, and refuse to generate output where the underlying premise is unverified. A model running without those constraints will reproduce whatever the client believes, confidently and plausibly, and the audit trail will only tell you about it after the damage is done.
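A guardrail of this kind can be sketched in a few lines: compare each client statement with the firm's own records, and decline to generate anything while a premise is unverified or contradicted. The function and field names below are hypothetical, and the exact-match comparison is a deliberate simplification of what a production check would need.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class VerificationResult:
    verified: dict[str, Any]   # claims that match the firm's own records
    unverified: list[str]      # claims with no authoritative value on file
    conflicts: list[str]       # claims that contradict the firm's records

    @property
    def safe_to_generate(self) -> bool:
        """Only generate output when every premise has been verified."""
        return not self.unverified and not self.conflicts


def verify_client_statements(
    claims: dict[str, Any], authoritative: dict[str, Any]
) -> VerificationResult:
    """Treat client statements as inputs to check, not facts to accept."""
    verified: dict[str, Any] = {}
    unverified: list[str] = []
    conflicts: list[str] = []
    for key, claimed in claims.items():
        if key not in authoritative:
            unverified.append(key)
        elif authoritative[key] != claimed:
            conflicts.append(key)
        else:
            verified[key] = claimed
    return VerificationResult(verified, unverified, conflicts)


# Made-up example: the client believes they qualify for a relief they do not.
claims = {"annual_income": 52000, "eligible_for_relief_x": True}
firm_records = {"annual_income": 52000, "eligible_for_relief_x": False}
result = verify_client_statements(claims, firm_records)
if not result.safe_to_generate:
    print("Refusing to draft; adviser must resolve:", result.conflicts + result.unverified)
```

The check runs before the model is asked to draft anything, so a confidently stated false belief is flagged for the adviser instead of being built into the output.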

AI transparency is declining, which means firms must build their own evidence

There is a reasonable question here: should the companies building AI models not be providing this transparency themselves?

The data suggests the opposite is happening. The Foundation Model Transparency Index, tracked by Stanford since 2023, scores AI developers on how much they disclose about how their models are built, trained, and deployed. After improving from an average score of 37 to 58 between 2023 and 2024, the average dropped to 40 in 2025. The companies building the models firms depend on are telling firms less about how those models work, not more.

The weakest areas of disclosure are the ones that matter most for regulated firms: training data, compute resources, and post-deployment impact. The average score for data properties across all assessed models was 15 out of 100.

For any Head of Advice whose firm is deploying AI tools built on third-party models, this creates a specific problem. You can’t rely on the model provider to give you the documentation the FCA expects. The transparency is not there. The audit trail must be built at the point of interaction, inside your own systems, capturing what the AI actually did in each customer conversation.

This is one of the reasons domain-specific models built for financial services carry a governance advantage. A model designed for a regulated environment can be documented, audited, and explained in ways a general-purpose frontier model cannot.

It is also the reason Aveni’s model suite is structured the way it is. Aveni runs a combination of fine-tuned frontier models and FinLLM, our own domain-specific large language model built for UK financial services. FinLLM was trained on financial services data, aligned with UK regulatory context, and designed to outperform general-purpose models on the accuracy and speed benchmarks that matter for advice and compliance use cases. Because we built it, we can tell firms exactly what it was trained on, how it was evaluated, and how it behaves in production. That level of transparency is not something firms can get from a general-purpose model, and it is what makes a defensible audit trail possible.

What a complete audit trail for AI-assisted advice actually looks like

A retrievable audit trail is more than a system that logs outputs. Logging what the AI said is the easy part. The hard part is capturing the full decision chain so that a regulator, a compliance officer, or a customer can reconstruct what happened months or years after the event.

There are five stages in a complete chain of custody for AI-assisted advice.

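Stage 1: Input captured. What client data did the AI receive? What was the client’s question or objective? What financial information was used as the basis for the recommendation? This maps to the suitability requirement to record what client information was obtained, and to the Consumer Duty cross-cutting rule to avoid causing foreseeable harm, since a recommendation built on inaccurate or incomplete information is a foreseeable source of harm.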

Stage 2: AI reasoning logged. What recommendation did the AI generate? What factors drove that recommendation? What alternatives did it consider and reject? If the model flagged uncertainty or low confidence, was that captured? This is the stage most firms miss entirely. They capture the output but not the reasoning that produced it.

Stage 3: Adviser review recorded. Did the adviser accept the AI recommendation as-is, modify it, or override it completely? What did they change and why? This is the gap the FCA’s 2018 Robo-Advice Review identified, and it has grown wider with more capable AI tools. If the adviser changed the recommendation, the original AI output and the adviser’s amended version both need to be preserved.

Stage 4: Customer interaction documented. What was communicated to the customer? Was it made clear that AI assisted with the recommendation? Did the customer understand the basis for the advice? This maps directly to the Consumer Duty consumer understanding outcome.

Stage 5: Outcome linked. Can the firm connect this specific interaction to the customer’s actual outcome? Can the full chain be retrieved months or years later? This maps to Consumer Duty’s ongoing monitoring obligation. The suitability of advice is not fixed at the point of delivery. It must be tracked over time, and the evidence must be retrievable for as long as the regulatory obligation lasts.

Each stage produces a piece of evidence. Together, they form the chain of custody. Break any single link and the evidence is incomplete.
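The five stages can be modelled as a single record with one evidence reference per link, which makes a broken chain easy to detect. This is an illustrative sketch: the `ChainOfCustody` class, its field names, and the reference strings are assumptions, not a prescribed format.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ChainOfCustody:
    """One evidence reference (for example a document ID) per stage; None means missing."""
    input_captured: Optional[str] = None
    ai_reasoning_logged: Optional[str] = None
    adviser_review_recorded: Optional[str] = None
    customer_interaction_documented: Optional[str] = None
    outcome_linked: Optional[str] = None

    def missing_links(self) -> list[str]:
        """Names of the stages that have no evidence attached, in stage order."""
        return [f.name for f in fields(self) if getattr(self, f.name) is None]

    def is_complete(self) -> bool:
        return not self.missing_links()


# Made-up example: output and letter were saved, reasoning and outcome were not.
chain = ChainOfCustody(
    input_captured="transcript-123",
    adviser_review_recorded="review-456",
    customer_interaction_documented="suitability-letter-789",
)
print(chain.is_complete(), chain.missing_links())
```

Running a completeness check like this across every interaction gives a firm a direct measure of how many of its chains would survive a request for evidence.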

This five-stage framework mirrors the direction regulators are already moving in at the model level. The Bank of England’s PRA flagged in October 2025 that banks’ monitoring of AI models was not frequent enough. In March 2026, Starling’s CIO Harriet Rees submitted a proposal to the government for a standardised, independent testing regime for AI models used in banking, arguing that firms currently rely on their own internal checks without any shared benchmark. The government’s initial response was that the AI Security Institute would not expand its remit to cover routine commercial AI in banking, but the underlying pressure is not going away. Regulators want evidence that AI in financial services is being properly tested, monitored, and governed.

The chain of custody for AI-assisted advice is the interaction-level equivalent. Where the PRA is asking how firms evaluate the models they deploy, Consumer Duty asks how firms evidence the outcomes those models produce for individual customers. The two lines of scrutiny are converging. A firm that can produce model-level documentation but no interaction-level audit trail will answer half the question. A firm that can produce both will answer the whole one.

Firms are starting to invest in AI governance, but the infrastructure gap remains

The good news is that firms know this is a problem and investment is moving in the right direction. The bad news is that most firms are still in the early stages of building the infrastructure to solve it.

According to the 2025 McKinsey and AI Index survey, AI-specific governance roles grew 17% in 2025. The share of businesses with no responsible AI policies in place fell from 24% to 11%. Firms are hiring people, creating functions, and writing policies. The direction of travel is clear.

But the obstacles are also clear. Knowledge gaps remain the top barrier to implementing responsible AI, cited by 59% of respondents (up from 51% in 2024). Budget constraints were cited by 48%. Regulatory uncertainty by 41%. Among firms specifically trying to scale agentic AI, 62% cited security and risk concerns as the primary barrier.

The pattern is consistent: firms understand the risk, they’re building the structures to address it, but they don’t yet have the tools to close the gap between policy and practice. A responsible AI policy that says “we will maintain audit trails for AI-assisted advice” is a good start. A system that actually captures those audit trails at every stage of every interaction is the part that matters. Most firms have the first. Very few have the second.

The regulatory timeline adds urgency. The Mills Review will report to the FCA Board in summer 2026, with comprehensive guidance on how Consumer Duty and SMCR apply to AI expected by year-end. The EU AI Act’s high-risk provisions take effect in August 2026. The new targeted support regime, relevant to firms using AI for personalised nudges and recommendations, came into force in April 2026. The window for building governance infrastructure before the guidance arrives is closing.

How Aveni Assist builds a retrievable record for every AI-assisted interaction

The charge in Count II is giving guidance with no retrievable AI audit trail. The defence is building a system that captures the full chain of custody for every AI-assisted interaction, automatically, at scale, without relying on advisers to create manual records after the fact.

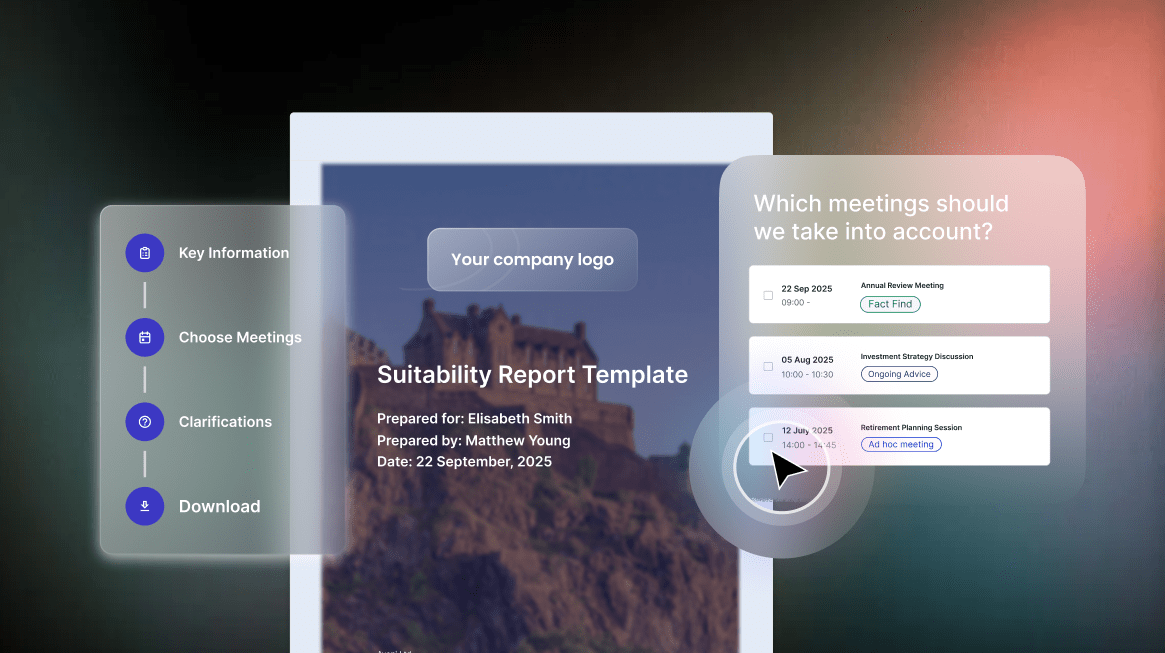

This is what Aveni Assist is built to do.

Aveni Assist sits inside the adviser workflow and captures the full interaction lifecycle. When an adviser has a client meeting, Aveni Assist records the conversation, extracts the key financial information, and generates a structured meeting summary. It logs what the AI produced, what the adviser reviewed, and what changes were accepted or rejected. Evidence links tie each output back to the exact part of the transcript it was drawn from, so a reviewer can verify the source of any recommendation or fact-find entry. The full record is searchable and traceable across the advice value chain, and can be pushed into the firm’s CRM for long-term retention.

Against the five-stage chain of custody:

Input captured: Recordings are ingested from Teams, Zoom, Google Meet, Webex, mobile, browser, or SFTP, alongside CRM client data, letter of authority responses, and adviser intent. The full transcript and structured fact-find data form the AI’s input record.

AI output logged: Every AI-generated output, including summaries, fact-find entries, draft emails, and draft suitability reports, is stored against the meeting. Aveni Assist produces a traceability report on demand that links each section of a generated document back to the specific transcript evidence that produced it.

Adviser review recorded: Where the adviser uses Navi to refine AI output, the system generates proposed changes rather than overwriting content directly. The adviser chooses whether to accept or reject each proposal, and the record of that review is preserved. The overall workflow is built around human-in-the-loop editing, with the adviser retaining control of every output.

Customer interaction documented: Final compliance documents, including suitability reports and file notes, are generated from meeting data and templates, stored in S3, and written back to the firm’s CRM via integrations with Intelliflo, Xplan, and other systems.

Record retrievable over time: Records are stored against configurable tenant-level retention policies designed to accommodate FCA retention obligations. The full chain, from meeting recording through to final document, can be retrieved via the Assist web application or the public REST API for compliance review, complaint investigation, or regulatory inquiry.

The firms that will answer Count II successfully are the ones that can produce this evidence on demand. Not firms that avoided using AI in advice. Firms that used it with the infrastructure to prove it worked properly.

Where does your firm stand?

The five governance gaps outlined in the AI on Trial series map directly to the questions regulators, senior managers, and boards are already asking. The audit trail gap is the one that connects most directly to everyday advice operations. If your AI helped generate a recommendation today, could you retrieve the full record tomorrow?

This article is part of Aveni’s AI on Trial: The Burden of Proof campaign series.
