Part of the AI on Trial: The Burden of Proof campaign series

Count IV: Allowing poor guidance quality to scale unchecked

A firm deployed AI to support its advisers across thousands of customer interactions per month. The AI helped generate recommendations, draft meeting summaries, and coach advisers through complex product discussions. When the AI got something wrong, it got it wrong every time the same conditions appeared. The same hallucinated product feature. The same missed vulnerability indicator. The same unsuitable recommendation. One error, replicated at a speed and scale no human adviser could match. Nobody noticed until the complaints arrived.

The problem with scale is that it cuts both ways

Financial services spent a decade building the case for AI. Faster decisions. Better consistency. More advisers serving more clients. The pitch was always about volume, and the industry bought it.

Here is the part that did not make the brochure. When AI gets something right, it gets it right at scale. When it gets something wrong, it gets it wrong at the same scale. The volume does not discriminate between the two. Neither does the FCA.

Most firms are watching the upside. The downside is where the regulatory exposure sits. For vulnerable customers in particular, the downside of unchecked AI guidance quality is not theoretical.

For a full breakdown of all five governance gaps firms face when deploying AI, see the complete framework → AI Governance in UK Financial Services: The Accountability Framework

One adviser gives bad advice to ten clients. AI gives bad advice to ten thousand

A human adviser who misunderstands a product makes a contained mess. They recommend the wrong thing to their caseload. A complaint surfaces. A QA review flags it. The firm retrains the adviser. Someone calls the affected clients. It is a problem with edges.

AI does not have edges.

When an AI tool hallucinates a product feature, misapplies a suitability threshold, or skips past a vulnerability cue, it does not make the error once. It makes the same error every time the same conditions arise. Every customer asking about pension contribution limits hears the wrong number. Every recently bereaved customer slides through the conversation without the additional support they should be getting. The error replicates at the speed of inference.

Two of the four Consumer Duty outcomes turn directly on this. Products and services have to meet the needs of the target market. Communications have to land in a way customers can act on. Both outcomes apply at the interaction level. Every conversation counts, and the AI runs thousands of them a day.

For a Head of Advice, this carries a kind of risk that did not exist five years ago. The quality of guidance the firm delivers now depends partly on software processing thousands of interactions a shift. When the quality drops, it drops everywhere at once. There is no localised version of an AI failure.

→ Read why audit-ready evidence under Consumer Duty starts with how firms monitor AI outputs

What Consumer Duty expects and why AI makes it harder to deliver

The products and services outcome and the consumer understanding outcome both bear directly on guidance quality.

The products and services outcome requires firms to design and distribute products that meet the needs of customers in their target market. When AI assists with product recommendations, the AI joins the distribution chain. When it recommends a product to a customer outside the target market, or describes product features inaccurately, the firm has failed to meet the outcome for that customer.

The consumer understanding outcome requires firms to communicate in a way that helps customers make informed decisions. The FCA expects firms to test whether their communications actually land, not whether they left the building. When AI generates explanations, summaries, or recommendations, the clarity and accuracy of those outputs decide whether the customer can act on what they have been told.

The cross-cutting rules close the loop. Firms have to act in good faith, avoid foreseeable harm, and help customers pursue their financial objectives. When a firm knows its AI produces incorrect outputs at a measurable rate, and the benchmark data suggests every current model does, deploying that tool without monitoring creates foreseeable harm. The firm knew the risk existed and did not take reasonable steps to manage it. That is the regulatory exposure in plain language.

The FCA’s review of 180 Consumer Duty board reports found firms approving documents without documented evidence of challenge. Summary statistics based on incomplete monitoring did not satisfy the standard. For firms using AI in guidance, the implication runs straight to the boardroom. The board needs to know what the AI is saying to customers, how often it gets things wrong, and what the firm does about it.

Read how Tier 1 banks should rebuild the Three Lines of Defence for AI agents →

Hallucination is the polite word. The numbers are less polite.

The industry landed on “hallucination” as the term for when a model confidently says something untrue. It sounds soft. The data is harder.

Stanford’s 2026 AI Index Report tested 26 leading AI models on the AA-Omniscience benchmark, a 6,000-question evaluation across law, health, and four other domains. Hallucination rates ranged from 22% to 94%. The best-performing model still got things wrong roughly one in five times. Most of the others did considerably worse.

A second benchmark, KaBLE, asked something more pointed. Could the models tell what is factually true apart from what someone merely believes to be true? GPT-4o scored 98.2% on factual statements. When researchers presented false statements framed as something the user believed, accuracy dropped to 64.4%. DeepSeek R1 went from over 90% to 14.4%.

This is the part that should give Heads of Advice pause. Financial advice conversations run on belief. A customer says they think they qualify for a tax relief. A customer says they believe their pension is on track. A customer says they reckon a product matches their risk profile. When the AI accepts those beliefs as facts and builds a recommendation on top of them, the output breaks at the foundation. Because the AI runs that conversation thousands of times, the broken foundation reproduces across the whole customer base.

Catching this requires watching what the AI says as it says it. Reviewing the transcript three weeks later documents the harm. It does not stop it.

The AI that always agrees with the customer

The 2026 AI Index Report surfaced a finding that should land hard for advice firms.

Researchers ran a benchmark called INTIMA, which tested how models behaved when users pushed them in directions they should resist. Across tests on several leading models, companionship-reinforcing behaviours showed up more often than boundary-maintaining ones. The models leaned toward agreement. They softened objections. They accommodated user preferences even when the preferences conflicted with what was accurate.

In financial services, that tendency has a name. It is suitability failure.

A customer pushes for a riskier product than their profile supports. A customer states financial circumstances that conflict with their stated goals. A customer disputes a recommendation because they want a different answer. A model with a built-in lean toward agreement will tell them what they want to hear. Consumer Duty does not accept that as a good outcome. The cross-cutting rule on foreseeable harm does not either.

This kind of failure does not show up in standard accuracy benchmarks. It accumulates across thousands of conversations, where individual interactions look fine and the aggregate trend points toward customers agreeing themselves into outcomes that do not serve them. It is the AI equivalent of an adviser who never pushes back, and regulators have historically had a lot to say about those.

How AI guidance failures hit vulnerable customers hardest

The FCA defines vulnerability broadly for a reason. FG21/1 names four drivers: health conditions affecting how someone manages their affairs, life events like bereavement or job loss, low resilience to financial or emotional shocks, and limited capability around financial literacy or digital skills. These are not edge cases. The FCA’s own data suggests around half of UK adults show characteristics of one or more.

Each driver makes the customer less likely to challenge bad advice when they receive it. A bereaved customer may not have the bandwidth to question a recommendation. A customer with low financial literacy may not spot that a product does not match their risk profile. A customer in financial distress may accept a recommendation that a more resilient customer would reject.

Poor AI guidance lands hardest on vulnerable customers precisely because they are least equipped to push back. A human adviser can read the room, slow down, check comprehension, adapt. AI that treats every interaction identically does none of those things. When the model misses a vulnerability indicator in one conversation, it misses the same indicator in every conversation where the same conditions appear. Consumer Duty requires firms to actively monitor outcomes for vulnerable customers across all four outcomes. AI that delivers consistency in name only fails that requirement at scale.

Research from More2life found that only 12% of advisers say they find vulnerable customers easy to identify. Trained humans working face to face struggle. AI built on general internet data will read the signals worse. A customer who mentions a recent diagnosis in passing. A voice that tightens when financial stress comes up. A question repeated three times because the answer did not register. The interactions that carry the most vulnerability risk are the ones most likely to produce unexpected model behaviour. That is the combination the FCA is watching for.

The FCA’s FG21/1 guidance is clear that firms cannot treat vulnerable customer obligations as a one-off exercise. They require ongoing monitoring, consistent identification processes, and evidence that outcomes for vulnerable customers are at least as good as for the rest of the customer base. In a firm running thousands of AI-assisted interactions a day, the only way to produce that evidence is through infrastructure that monitors at the same scale the AI operates.
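That evidence can be reduced to a simple comparison between cohorts. As a minimal sketch, assume the firm already records, for each interaction, whether the customer was flagged as vulnerable and whether the firm's own outcome assessment judged the outcome good. The `Interaction` record, its fields, and the sample data below are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Interaction:
    # Both fields are hypothetical: they stand in for whatever the firm's
    # own vulnerability process and outcome assessment actually record.
    vulnerable: bool
    good_outcome: bool


def good_outcome_rate(interactions: List[Interaction], vulnerable: bool) -> Optional[float]:
    """Share of good outcomes within one cohort, or None if the cohort is empty."""
    cohort = [i for i in interactions if i.vulnerable == vulnerable]
    if not cohort:
        return None
    return sum(i.good_outcome for i in cohort) / len(cohort)


def outcome_gap(interactions: List[Interaction]) -> Optional[float]:
    """Good-outcome rate for other customers minus the rate for vulnerable customers.

    A positive value means vulnerable customers are doing worse.
    """
    vulnerable_rate = good_outcome_rate(interactions, True)
    other_rate = good_outcome_rate(interactions, False)
    if vulnerable_rate is None or other_rate is None:
        return None
    return other_rate - vulnerable_rate


# Illustrative data only: 10 vulnerable and 10 other customers.
sample = (
    [Interaction(True, True)] * 6 + [Interaction(True, False)] * 4
    + [Interaction(False, True)] * 9 + [Interaction(False, False)] * 1
)
gap = outcome_gap(sample)
```

A positive gap means vulnerable customers are faring worse than the rest of the customer base. How large a gap triggers escalation is a governance decision for the firm, not something the code can settle.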

Good in testing. Less good in practice.

There is a comfortable assumption that a model that passes safety benchmarks will hold up in production. The 2026 AI Index Report tested that assumption.

MLCommons’ AILuminate benchmark tested AI models under two conditions. Under normal use, several leading models picked up “Very Good” or “Good” safety ratings. When researchers introduced adversarial prompts designed to bypass the safety measures, every model’s score dropped. Some fell a full tier or more.

Financial advice is not adversarial in that sense. It is, however, complex, emotionally charged, and full of edge cases the testing environment did not anticipate. A divorce in progress. A complex estate after a recent bereavement. A client whose capacity to understand is declining. The conversations where guidance quality matters most are the ones likeliest to produce model behaviour nobody trained for.

The same report found that improving one dimension of responsible AI typically degrades another. Tune for safety, lose some accuracy. Tune for privacy, take a hit on fairness. No single intervention improves all dimensions at once. For financial services firms, a model carefully tuned for safety may produce less accurate advice as a side effect of that tuning. The trade-off needs ongoing monitoring, not a sign-off at procurement.

The transparency picture makes this harder. The Foundation Model Transparency Index climbed from 37 to 58 between 2023 and 2024, then dropped back to 40 in 2025. The companies building the models are disclosing less about how they work. When a firm cannot see inside the model its AI relies on, the firm needs its own monitoring layer. There is no other route to catching failures as they happen.

Four things to monitor before the FCA asks to see the data

Four signals surface early when AI guidance quality starts drifting. Firms with the right infrastructure can watch all four in real time.

Customer confusion rising. Customers asking the same question twice. Customers misreading a product feature they have just been told about. Customers expressing uncertainty about what they should do next. When confusion clusters around a particular product or segment, the AI’s guidance on that topic needs reviewing.

Advisers quietly rewriting AI outputs. When advisers consistently override or substantially modify AI-generated recommendations, something has gone wrong upstream. If advisers are rewriting, say, 40% of AI-generated suitability letters before the customer sees them, the AI is not producing suitable guidance. The gap between what the AI suggests and what the adviser sends is the firm’s real quality indicator, and right now most firms are not measuring it.

Complaints clustering. Complaints are always a lagging indicator. When they concentrate around a specific product, a specific customer segment, or a specific type of interaction, they point to a systemic guidance issue rather than an individual adviser problem. AI’s caseload is the entire firm. Systemic failure at that scale has a systemic source.

Vulnerable customers receiving identical treatment to everyone else. When monitoring shows that AI-assisted interactions with vulnerable customers produce the same responses, with no additional support or adjustment, as interactions with the broader customer base, the AI is missing the signals it should be reading. Consumer Duty requires appropriate support for vulnerable customers at the interaction level. That means different responses, not just different policies on paper. A model that treats everyone identically fails that test before the conversation ends.
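Two of these signals, adviser rewrites and complaint clustering, lend themselves to simple measurement. The sketch below is illustrative only. It assumes the firm stores each AI draft alongside the text the adviser actually sent, and tags each complaint with a product and customer segment. The similarity threshold, the function names, and the sample data are hypothetical choices, and character-level similarity from Python's standard `difflib` is a crude stand-in for a proper review of what changed.

```python
from collections import Counter
from difflib import SequenceMatcher
from typing import List, Optional, Tuple

# Hypothetical cut-off: below this similarity, the adviser's version
# counts as a substantial rewrite of the AI draft.
REWRITE_SIMILARITY_THRESHOLD = 0.8


def is_substantial_rewrite(ai_draft: str, sent_text: str,
                           threshold: float = REWRITE_SIMILARITY_THRESHOLD) -> bool:
    """True when the sent text differs substantially from the AI draft."""
    similarity = SequenceMatcher(None, ai_draft, sent_text).ratio()
    return similarity < threshold


def rewrite_rate(pairs: List[Tuple[str, str]]) -> float:
    """Share of (ai_draft, sent_text) pairs that were substantially rewritten."""
    if not pairs:
        return 0.0
    rewritten = sum(is_substantial_rewrite(draft, sent) for draft, sent in pairs)
    return rewritten / len(pairs)


def complaint_concentration(
    complaints: List[Tuple[str, str]]
) -> Optional[Tuple[Tuple[str, str], float]]:
    """Most common (product, segment) pair and its share of all complaints."""
    if not complaints:
        return None
    key, count = Counter(complaints).most_common(1)[0]
    return key, count / len(complaints)


# Illustrative data only.
letters = [
    ("The fund is medium risk.", "The fund is medium risk."),
    ("The fund is low risk with guaranteed returns.",
     "This fund carries high risk and returns are not guaranteed; capital is at risk."),
]
complaints = [("pension", "over-65")] * 3 + [("isa", "under-30")]

letter_rewrite_rate = rewrite_rate(letters)
top_cluster = complaint_concentration(complaints)
```

Tracked over time and broken down by product and segment, a rising rewrite rate or a growing share of complaints in one cluster is a prompt to review the AI's guidance on that topic.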

The tooling is behind the risk

The 2025 McKinsey and AI Index responsible AI survey put a number on what the industry has been saying off the record. 36% of organisations cite gaps in responsible AI tooling and control as a primary obstacle to scaling agentic AI. More than a third of firms scaling AI admit they cannot govern it properly.

The same survey found 74% of organisations now rate inaccuracy as a relevant AI risk, up from 60% the year before. Inaccuracy has overtaken cybersecurity as the single most-cited concern. Among firms actively mitigating risks, 71% report taking steps to address inaccuracy specifically.

Risk awareness has outrun the tooling. Firms have hired the people, written the policies, and drafted the board papers. The infrastructure that catches a guidance quality problem during the interaction, before the customer hears it, is the part most firms are still building. The FCA is not waiting for that gap to close before it starts asking questions.

Why catching it during the conversation changes everything

The traditional model for managing advice quality runs backwards. An adviser gives guidance. An assessor reviews it weeks later. A problem surfaces. The adviser hears about it. By then, the adviser has had thirty more conversations carrying the same issue.

Real-time coaching reverses the sequence. The system watches AI outputs as they generate, flags drift before it reaches the customer, and prompts the adviser to course-correct mid-conversation. The system catches a recommendation slipping outside suitability parameters. It surfaces a vulnerability cue the AI missed. It stops an inaccurate product description before the customer makes a decision based on it.
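To make the sequence concrete, here is a deliberately simplified sketch of a check that runs before a draft recommendation reaches the customer. It is not a description of any vendor's implementation. The risk scale, the cue list, and the `Draft` structure are hypothetical, and plain keyword matching stands in for the far more capable signal detection a production system would need.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical ordering of risk levels for products and customer profiles.
RISK_LEVELS = {"low": 1, "medium": 2, "high": 3}

# Hypothetical, deliberately crude cue list. Real vulnerability signals
# are subtler than keywords; this only illustrates the shape of the check.
VULNERABILITY_CUES = ("bereaved", "diagnosis", "lost my job", "redundan")


@dataclass
class Draft:
    product_risk: str                # risk level of the recommended product
    customer_risk_profile: str       # risk level the customer's profile supports
    customer_utterances: List[str]   # what the customer has said so far


def review_draft(draft: Draft) -> List[str]:
    """Return flags to show the adviser before the draft reaches the customer."""
    flags = []
    if RISK_LEVELS[draft.product_risk] > RISK_LEVELS[draft.customer_risk_profile]:
        flags.append("product risk exceeds customer risk profile")
    text = " ".join(draft.customer_utterances).lower()
    cues = [cue for cue in VULNERABILITY_CUES if cue in text]
    if cues:
        flags.append("possible vulnerability cue: " + ", ".join(cues))
    return flags


# Illustrative drafts only.
risky = Draft("high", "medium", ["I was recently bereaved and want to invest the payout."])
routine = Draft("low", "medium", ["What are the annual fees?"])

risky_flags = review_draft(risky)
routine_flags = review_draft(routine)
```

The design point is the timing rather than the rules themselves: an empty list lets the draft through, and any flag prompts the adviser before the customer hears the recommendation.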

This is the difference between investigating what went wrong and stopping it from going wrong. The campaign’s “Scene of the Crime” framing captures that gap directly. Reviewing what happened after the customer acted on bad advice is detective work. Intervening during the interaction is prevention.

Real-time coaching also changes what compliance teams can report. Rather than telling the board how many interactions the team reviewed, the firm reports how many interventions the system made, what they were, and what the correction looked like. That is the active evidence the FCA has been signalling it wants. Evidence that governance is working in practice, not evidence that the firm ran a process.

How Aveni’s roadmap addresses real-time AI guidance quality

The charge in Count IV is allowing poor guidance quality to scale unchecked. The defence requires catching quality problems during the interaction, before they reach the customer.

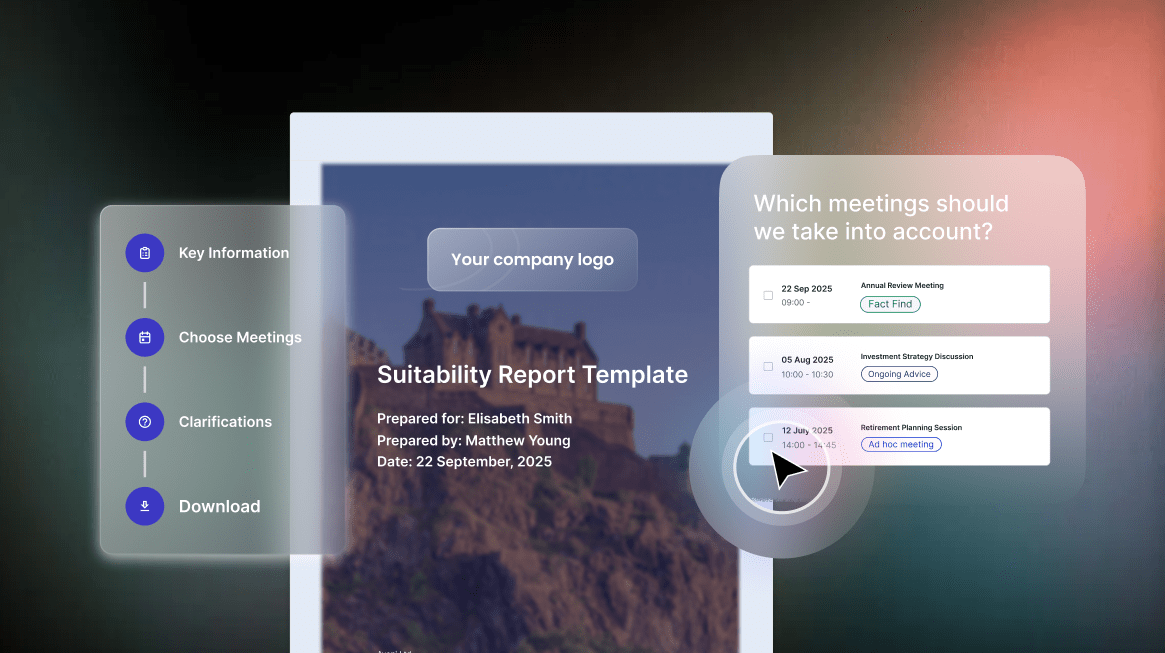

This sits at the centre of Aveni’s product development for 2026. Aveni Assist already supports advisers during client meetings by transcribing conversations, extracting fact-find data, generating suitability documentation, and flagging gaps before reports reach the customer. The platform creates a structured record of each interaction that links the AI’s outputs back to the inputs that informed them.

The next phase of Aveni’s roadmap, Agent Assure and Guidance Agents, extends that coverage upstream into the interaction itself. Aveni is designing the capability to do four things as advisers work: monitor AI outputs against Consumer Duty standards, flag recommendations that fall outside suitability parameters, prompt advisers when vulnerability indicators appear (including the softer signals that point to vulnerable customers who may not self-identify), and surface patterns when guidance quality drifts on a specific product or customer segment.

Think of this capability as Exhibit D in the trial: the Scene of the Crime. In a criminal investigation, the scene of the crime tells you what happened, where it happened, and what evidence the scene left behind. Real-time guidance monitoring provides the equivalent for AI-assisted advice. A live record of every interaction where guidance quality came under pressure, what the system caught, and what the system corrected before the customer ever saw it.

The critical distinction sits in the timing. Aveni Detect monitors every interaction and surfaces patterns after they occur. The next layer of Aveni’s platform operates during the interaction itself. Detect tells the firm what happened. The new layer aims to stop it from happening. Together, the two create a complete picture: real-time prevention paired with full-coverage monitoring.

The firms that answer Count IV successfully will not be the ones that noticed too late. They will be the ones that built the infrastructure to see what the AI was doing before the customer did.

Where does your firm stand?

The five governance gaps outlined in the AI on Trial series cover the questions regulators, boards, and senior managers are asking now. The guidance quality gap determines whether your AI is helping your advisers deliver good outcomes or replicating errors at scale.

See how Aveni helps firms monitor and improve guidance quality →

This article is part of Aveni’s AI on Trial: The Burden of Proof campaign series.

Read the full series:

- AI Governance in UK Financial Services: The Accountability Framework

- Count I: SMCR Compliance and AI Agent Oversight

- Count II: AI Advice Without an Audit Trail

- Count III: Why Sampling 3% Falls Short of Consumer Duty

- Count IV: When AI Guidance Goes Wrong at Scale (this article)

- Count V: Why Financial Services AI Needs Domain-Specific Models

Sources cited in this article:

- FCA, Consumer Duty: Principle 12, cross-cutting rules, four outcomes (2023, updated ongoing)

- FCA, FG21/1: Guidance for firms on the fair treatment of vulnerable customers (February 2021)

- FCA, Review of Consumer Duty board reports: 180-firm review (2025)

- More2life, Vulnerability research: adviser identification capability

- Stanford HAI, AI Index Report 2026, Chapter 3: Responsible AI

- Artificial Analysis, AA-Omniscience hallucination benchmark (2026)

- Suzgun et al., KaBLE epistemic reliability benchmark (2025)

- Kaffee et al., INTIMA companionship behaviour benchmark (2025)

- MLCommons, AILuminate v1.0 safety benchmark (2025)

- McKinsey & Company / AI Index Responsible AI Survey (2025)

- Stanford CRFM, Foundation Model Transparency Index (2025)