Part of the AI on Trial: The Burden of Proof campaign series

Count I: Deploying AI agents you cannot supervise

The charge is straightforward. A firm deployed AI agents that make decisions affecting customers. No named individual can demonstrate how those agents are supervised, what decisions they made, or why. Under SMCR, that is not an operational gap. It is a personal liability.

SMCR conduct rules already apply to AI decisions

SMCR compliance has always been about one thing: making sure a named individual can demonstrate they took reasonable steps to control the business they are responsible for. That requirement does not change because the decisions are now being made by AI agents instead of people.

When the House of Commons Treasury Committee published its report on AI in financial services in January 2026, it included a statement from FCA Executive Director David Geale that should concentrate the mind of every senior manager in a regulated firm. Individuals within financial services firms, Geale told the Committee, are “on the hook” for harm caused to consumers through AI.

That phrase deserves to be read slowly. Not firms. Individuals. Not at some point in the future when new rules arrive. Now, under existing rules.

The regime Geale was referring to is SMCR, the Senior Managers and Certification Regime. It has applied to banks since 2016 and to all FCA solo-regulated firms since December 2019. And the FCA has been clear that its expectations do not change because a decision was made by an algorithm instead of a person.

Senior Manager Conduct Rule 1 requires you to take reasonable steps to ensure the business for which you are responsible is controlled effectively. Conduct Rule 2 requires you to take reasonable steps to ensure that business complies with regulatory requirements. Conduct Rule 3 requires that any delegation of your responsibilities is to an appropriate person and that you oversee the discharge of that delegation effectively.

Read those rules in the context of AI agents that autonomously triage customer queries, draft suitability letters, flag vulnerability indicators, or generate investment recommendations. The question they raise is simple: what does “reasonable steps” look like when the system making decisions updates weekly, processes thousands of interactions a day, and operates in ways that are difficult to explain even to technical specialists?

Nobody has answered that question yet. And that uncertainty is itself a risk.

For a full breakdown of all five governance gaps firms face when deploying AI, see the complete framework → AI Governance in UK Financial Services: The Accountability Framework

What the FCA Mills Review means for AI agent governance

On 27 January 2026, the FCA launched the Mills Review, a long-term examination of how AI will reshape retail financial services. Led by Executive Director Sheldon Mills, the Review is looking at how existing regulatory frameworks, including Consumer Duty and SMCR, should apply as AI becomes embedded in how firms operate.

In his launch speech at the FCA’s Supercharged Sandbox Showcase, Mills put the accountability challenge directly:

“Accountability under the Senior Managers and Certification Regime still matters. But what does ‘reasonable steps’ look like when the model you rely on updates weekly, incorporates components you don’t directly control, or behaves differently as soon as new data arrives?”

That is not a hypothetical. It is a description of how most modern AI systems work. Foundation models are updated by their providers without notice to the firms that depend on them. Agentic AI systems chain together multiple models and data sources in ways that make it difficult to trace any single decision back to a single input. The gap between SMCR’s requirement for demonstrable oversight and the reality of how AI operates in production is widening.

Mills has indicated he will report to the FCA Board in summer 2026, with recommendations expected to lead to comprehensive guidance by the end of the year. The Treasury Committee has separately recommended that the FCA publish practical guidance on SMCR accountability for AI harm by that same deadline.

Until that guidance arrives, the rules are clear even if the playbook is not. Senior managers are accountable. The question is whether they can demonstrate it.

Why human-in-the-loop AI oversight is no longer enough

The Bank of England’s February 2026 roundtables with regulated firms surfaced a problem that compliance teams across the industry already know: the way firms have traditionally validated AI models is becoming unworkable.

Several participants told the Bank that the conventional approach to model risk management, built around understanding a model’s internal workings and how inputs map to outputs, is not effective for complex generative AI and agentic systems. The traditional emphasis on explainability at the model level does not translate to systems that produce different outputs from the same inputs depending on context, prompt history, and which version of the model was running at the time.

The rise of agentic AI also directly challenges the concept of a “human in the loop”. When a system is processing hundreds or thousands of decisions per day, a human reviewer cannot meaningfully evaluate each one. The model of a compliance officer reviewing a sample of outputs was designed for a world where most decisions were made by people and a small number were automated. That ratio has inverted in many firms. The oversight infrastructure has not kept pace.

The Bank of England’s summary noted that second-line risk functions continue to approach AI deployment cautiously, which in some firms creates bottlenecks rather than solutions. The result is a paradox: firms that are most careful about AI governance are also the ones most likely to slow down deployment without actually resolving the underlying oversight problem.

The challenge is real, and Baker McKenzie’s analysis of the Mills Review captures it precisely: the lack of explainability of many AI models conflicts with SMCR’s requirement for senior managers to demonstrate they understand and control risks within their areas of responsibility. The FCA has acknowledged this tension. It has not yet resolved it.

Five questions the FCA could ask about your AI agents today

If the FCA were to examine a firm’s AI agent oversight today, the questions would likely follow a predictable pattern. They would not be abstract. They would be specific, evidence-based, and focused on whether a named senior manager can demonstrate the oversight that SMCR requires.

Who is accountable for this AI system? Under SMCR, accountability sits with the Senior Manager whose Statement of Responsibilities covers the relevant function. The FCA and PRA considered creating a dedicated Senior Management Function for AI and decided against it. That means accountability maps to existing roles: SMF24 (Chief Operations Function) for the integrity of technology systems, SMF4 (Chief Risk Function) for model risk and data quality, and potentially SMF16 (Compliance Oversight) depending on the use case. If a firm cannot point to a named individual whose responsibilities clearly cover AI deployment, that is a gap.

Can you show me the decision log for this agent? Every decision an AI agent makes on behalf of a customer should be traceable. What information did the agent have? What did it recommend or decide? Why? If the system generates a suitability letter, for example, a regulator should be able to trace the path from the client data inputs, through the model’s reasoning, to the output the customer received. If the firm can only provide the final output and not the reasoning chain, the audit trail is incomplete.
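To make the idea of a traceable decision concrete, here is a minimal sketch of what one log entry might hold. It is an illustration under stated assumptions, not a regulatory standard or any vendor's schema: the class name, the field names, and the rule that a record needs inputs, reasoning, and output to count as traceable are all hypothetical.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
import hashlib
import json


@dataclass(frozen=True)
class DecisionRecord:
    """One agent decision. Every field name here is an illustrative assumption."""
    agent_id: str
    model_version: str
    inputs: dict      # the client data the agent had (must be JSON-serialisable)
    reasoning: list   # ordered reasoning steps the agent recorded
    output: str       # what the customer actually received
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def is_traceable(self) -> bool:
        # A final output with no inputs or no reasoning chain is an incomplete trail.
        return bool(self.inputs) and bool(self.reasoning) and bool(self.output)

    def fingerprint(self) -> str:
        # SHA-256 over a canonical JSON form, so a later edit to the record is detectable.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()
```

A record that holds only the suitability letter, with empty inputs and reasoning, would fail `is_traceable()`, which is the gap the question is probing.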

How do you know the model is performing within acceptable parameters today? Not last quarter. Not when it was validated. Today. AI models drift. Their performance changes as the data they process changes. A validation exercise conducted six months ago tells a regulator very little about what the model is doing now. Continuous monitoring, with defined thresholds and automated alerts, is the minimum expectation.
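As a rough illustration of "defined thresholds and automated alerts", the sketch below keeps a rolling window of quality scores and signals an alert when the average falls below a floor. The class name, the idea of a single numeric score per interaction, and the window size are all assumptions made for the example; a real monitoring setup would choose its own metrics and thresholds.

```python
from collections import deque
from statistics import mean


class ThresholdMonitor:
    """Rolling check of a quality score against a firm-defined floor (hypothetical)."""

    def __init__(self, floor: float, window: int = 50):
        self.floor = floor
        # deque with maxlen drops the oldest score automatically once the window is full
        self.scores = deque(maxlen=window)

    def record(self, score: float) -> bool:
        """Add the latest score; return True if the rolling average breaches the floor."""
        self.scores.append(score)
        return mean(self.scores) < self.floor


# Usage: a floor of 0.9 over the last three interactions
monitor = ThresholdMonitor(floor=0.9, window=3)
alerts = [monitor.record(s) for s in (0.95, 0.92, 0.80, 0.99)]
```

The point of the rolling window is that the check reflects what the model is doing now, not what it did when it was last validated.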

What happens when the model behaves unexpectedly? Incident management for AI needs to be specific and rehearsed, not generic. If an AI agent gives a customer harmful advice at 3am on a Saturday, what is triggered? Who is notified? How quickly can the system be paused? How are affected customers identified and contacted? The firms that can answer these questions in detail are the ones that have taken SMCR accountability seriously.

How do you oversee the third-party models your agent depends on? Most AI agents in financial services rely on foundation models built by a handful of providers. Those providers update their models regularly, sometimes without notice. Under SMCR Conduct Rule 3, delegation of responsibility must be to an appropriate person, and the senior manager must oversee the discharge of that delegation effectively. If your AI agent’s behaviour changes because OpenAI or Anthropic pushed an update, and you did not know about it until a customer complained, that is a delegation oversight failure.
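One simple way to learn about a provider update before a customer does is to compare the model identifier attached to each response against the versions the firm has approved. The sketch below assumes the provider reports such an identifier with every response and that the firm keeps an approved list; the function name and the version strings are hypothetical.

```python
from typing import Iterable, List, Set, Tuple


def unapproved_responses(
    approved: Set[str], observed: Iterable[str]
) -> List[Tuple[int, str]]:
    """Return (position, version) for each response served by a version not on the approved list."""
    return [
        (position, version)
        for position, version in enumerate(observed)
        if version not in approved
    ]


# Usage: the second response came from a version nobody at the firm signed off
flagged = unapproved_responses(
    {"model-2026-01"},
    ["model-2026-01", "model-2026-02", "model-2026-01"],
)
```

A non-empty result is a prompt for review, not proof of harm: it tells the accountable senior manager that the delegated component has changed.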

These questions tie directly to structural accountability gaps that affect firms across financial services. See the full picture → AI Governance in UK Financial Services: The Accountability Framework

How SMCR compliance connects AI agent decisions to senior manager liability

Here is the chain that connects an AI agent’s decision to a senior manager’s personal liability under SMCR:

An AI agent makes a decision that affects a customer. Perhaps it generates a recommendation. Perhaps it triages a complaint. Perhaps it flags (or fails to flag) a vulnerability indicator.

That decision falls within the scope of a business area covered by a named senior manager’s Statement of Responsibilities.

If the decision causes harm, the FCA can take enforcement action against the senior manager personally if it can show that: (a) misconduct occurred within the firm, (b) the senior manager was responsible for the area in which the misconduct occurred, and (c) the senior manager did not take reasonable steps to prevent it.

The burden of proof in enforcement sits with the FCA. But the practical burden of demonstrating reasonable steps sits with the senior manager. If you cannot show what your AI did, why it did it, and how you were overseeing it, the FCA’s case becomes significantly easier to make.

The TSB IT migration enforcement action against former CIO Carlos Abarca is instructive. Abarca was fined for breaching PRA Senior Manager Conduct Rule 2 because he failed to ensure the migration was adequately risk assessed and tested, and did not obtain appropriate assurances from critical third-party providers. Swap “IT migration” for “AI agent deployment” and the parallels are obvious.

Building AI agent governance that satisfies SMCR compliance

The charge in Count I is deploying AI agents you cannot supervise. The defence is demonstrating that you can.

That means building what amounts to a governance layer that sits between the AI agent and the regulated environment in which it operates. A layer that records every decision the agent makes, the inputs it used, and the reasoning it applied. A layer that monitors the agent’s performance continuously against defined thresholds. A layer that allows a senior manager to see, in real time, what their AI is doing and whether it is operating within the boundaries the firm has set.
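A governance layer of this kind can be pictured as a thin wrapper around the agent. The sketch below is a simplified illustration, not a description of any product: the class name, the boundary check, and the choice to pause automatically after a blocked output are all assumptions made for the example.

```python
from typing import Callable, List, Optional


class GovernedAgent:
    """Wraps an agent callable, logs every request, and enforces a pause switch (hypothetical)."""

    def __init__(self, agent: Callable[[str], str], allowed: Callable[[str], bool]):
        self.agent = agent
        self.allowed = allowed        # firm-defined boundary check on outputs
        self.paused = False
        self.log: List[dict] = []     # in-memory only; a real log would be durable

    def handle(self, request: str) -> Optional[str]:
        if self.paused:
            # Nothing reaches the customer while the agent is paused.
            self.log.append({"request": request, "status": "refused_paused"})
            return None
        output = self.agent(request)
        within_bounds = self.allowed(output)
        self.log.append({
            "request": request,
            "output": output,
            "status": "released" if within_bounds else "blocked",
        })
        if not within_bounds:
            # Assumed policy: stop further decisions until a human reviews.
            self.paused = True
            return None
        return output
```

Whether a single out-of-bounds output should pause the whole agent is a policy decision for the firm. The sketch only shows that the record, the boundary, and the pause can live in one place.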

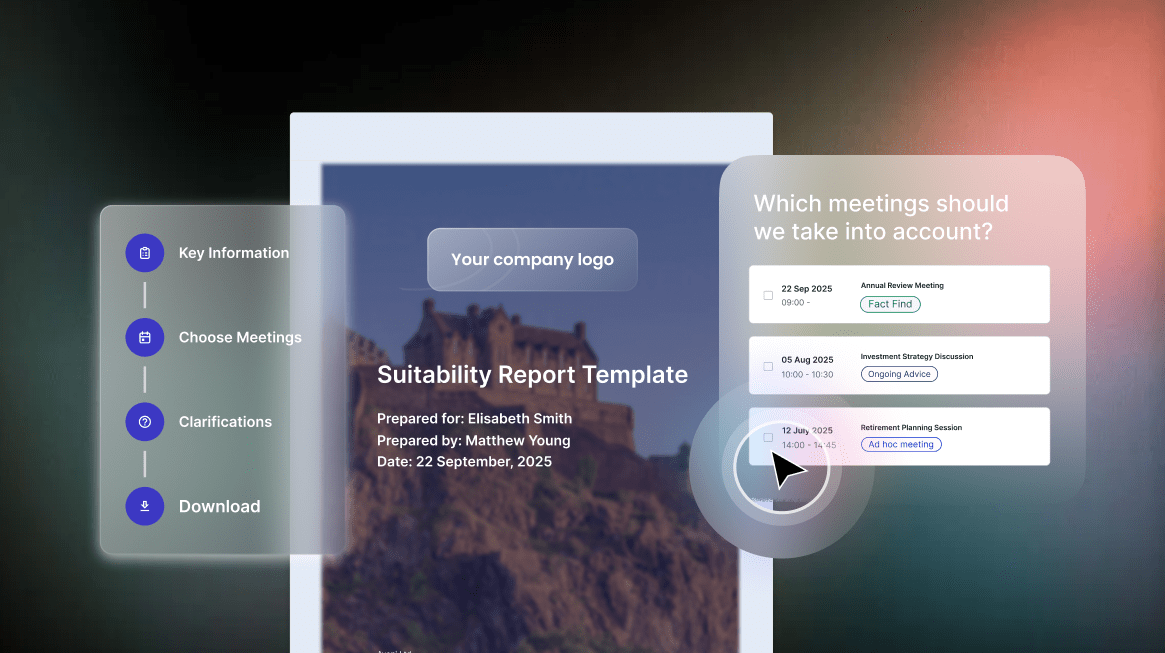

This is exactly the problem Aveni is building Agent Assure to solve.

Agent Assure is designed to provide the oversight infrastructure that SMCR demands but that most AI deployment frameworks lack. It will give senior managers a documented, auditable record of every AI agent interaction: what the agent did, what data it used, what it produced, and whether it operated within the governance parameters the firm defined. It will monitor agent performance against compliance thresholds continuously, not periodically. And it will provide the kind of evidence trail that a regulator would need to see.

Think of it as the character witness for the defence. In a courtroom, a character witness speaks to the defendant’s history of behaviour. Agent Assure is being built to provide the equivalent for AI agents: a complete, governed, documented history of every decision the agent made, demonstrating that the system operated responsibly and that the senior manager maintained meaningful oversight.

The firms that will be found “not guilty” of Count I are not the ones that avoided deploying AI. They are the ones that deployed it with the infrastructure to prove it was supervised, governed, and performing in customers’ interests.

SMCR compliance and AI: the 2026 regulatory timeline senior managers need to know

The regulatory timeline is clear. The Mills Review will report to the FCA Board in summer 2026. The Treasury Committee has called for comprehensive SMCR guidance on AI accountability by the end of the year. The EU AI Act’s high-risk provisions take effect in August 2026 and apply to UK firms that touch EU markets.

Waiting for guidance before building oversight infrastructure is a bet that nothing will go wrong in the meantime. Under SMCR, the senior manager who took that bet bears the personal consequences if it does not pay off.

The firms that are building their oversight infrastructure now are not doing so because a regulator told them to. They are doing it because they understand that demonstrable accountability is the condition that allows AI to be deployed at scale, with confidence, and with the full backing of the board.

The burden of proof is on you. The evidence starts with knowing what your AI is doing and being able to prove it.

This article is part of Aveni’s AI on Trial: The Burden of Proof campaign. Read the full AI Governance in UK Financial Services: The Accountability Framework for the complete picture of the five charges firms face.