Natural Language Processing (NLP) is a field of artificial intelligence that involves the use of algorithms, statistical models, and other techniques to analyse, understand, and generate human language. NLP has a wide range of applications within the financial services industry including risk management, sentiment analysis, and regulatory compliance. This technology is a powerful tool that enables financial services firms to gain valuable insights from unstructured data and improve their workflow.

NLP has come a long way since its early beginnings. Here’s a timeline of some major milestones in its history:

1950: Alan Turing published “Computing Machinery and Intelligence,” which proposed the Turing test as a measure of a machine’s ability to exhibit intelligent behaviour.

1950s: The earliest attempts at NLP were made using rule-based systems, which relied on a set of predefined rules to understand and generate human language.

1954: The Georgetown-IBM experiment was one of the first efforts to use computers to translate natural language. In this experiment, researchers used an IBM 701 to translate more than 60 Russian sentences into English.

1960s: Early conversational programs were developed, such as ELIZA (1966), which used simple pattern matching to respond to questions and statements in a way that seemed human-like.

1969: The first NLP conference was held at MIT, where researchers gathered to discuss the latest advancements in the field.

1970s: Research in NLP continued to focus on rule-based systems, with an emphasis on syntactic and semantic analysis.

1980s: The field of NLP saw the emergence of statistical methods, which relied on large amounts of data to understand and generate natural language.

1990s: With the advent of powerful computers and the availability of large amounts of text data, researchers began to build more advanced NLP systems using machine learning-based approaches.

1997: IBM’s Deep Blue defeated world chess champion Garry Kasparov, an important milestone for artificial intelligence more broadly.

2000s: The field of NLP began to see the development of more advanced systems, such as machine translation, speech recognition, and text-to-speech systems.

2010s: With the availability of large amounts of data and advances in machine learning, NLP saw a rapid advancement in the use of deep learning techniques, such as recurrent neural networks (RNNs) and transformer models.

2016: Google Translate introduced neural machine translation to improve the quality of translations.

2018: OpenAI’s language model, GPT-1, was released. It was pre-trained on a large body of text using unsupervised learning and then fine-tuned for specific tasks, which allowed it to generate human-like text.

2018: Google introduced BERT (Bidirectional Encoder Representations from Transformers), an AI language model that improved the state of the art in several natural language processing tasks.

2019: OpenAI released GPT-2, an improvement on GPT-1 that demonstrated the ability to generate highly coherent and realistic text.

2020: GPT-3, the third generation of GPT, was introduced with even larger capacity and more realistic outputs. It was used to generate text, answer questions, and perform other language-based tasks in a way that often appears indistinguishable from human-written text.

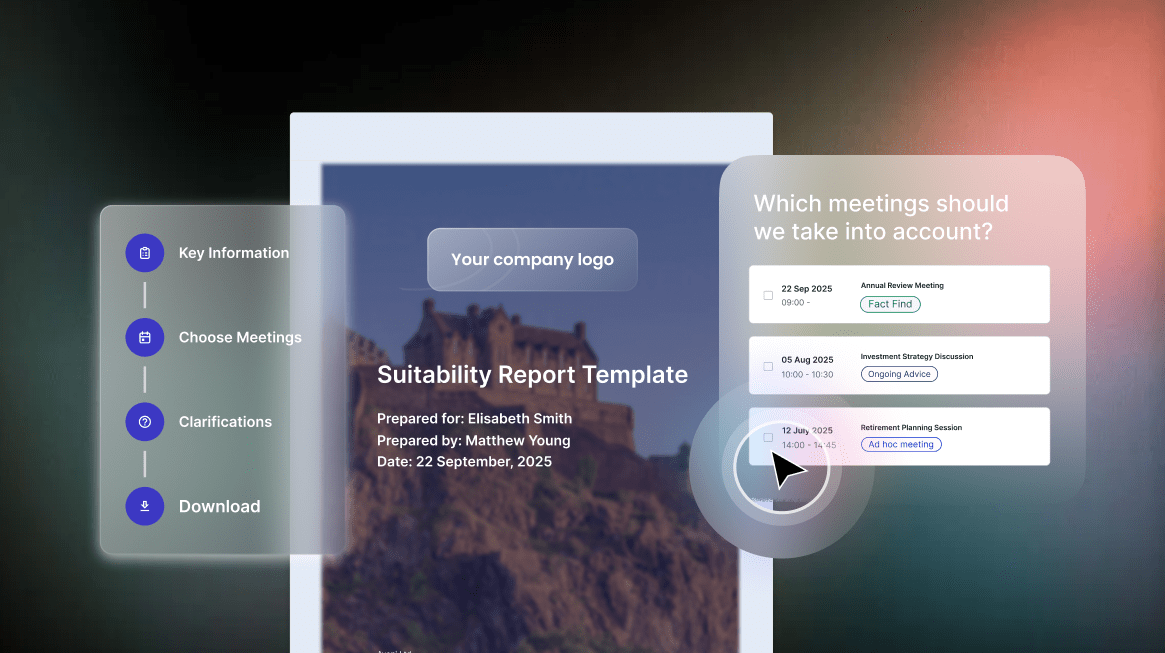

Aveni Detect uses Natural Language Processing and machine learning to automatically monitor and analyse all customer interactions, identifying and understanding risks that are important to your company. This leads to improved preventative control, enhanced data analytics, increased productivity, better agent performance, and revenue growth, making QA processes significantly faster and scaling oversight from 1% to 100%.

NLP has made significant progress since its early days. More advancements are on the way, and firms that adopt this technology will have a distinct advantage in the industry.

Connect with us on LinkedIn to stay updated on our latest news and developments.